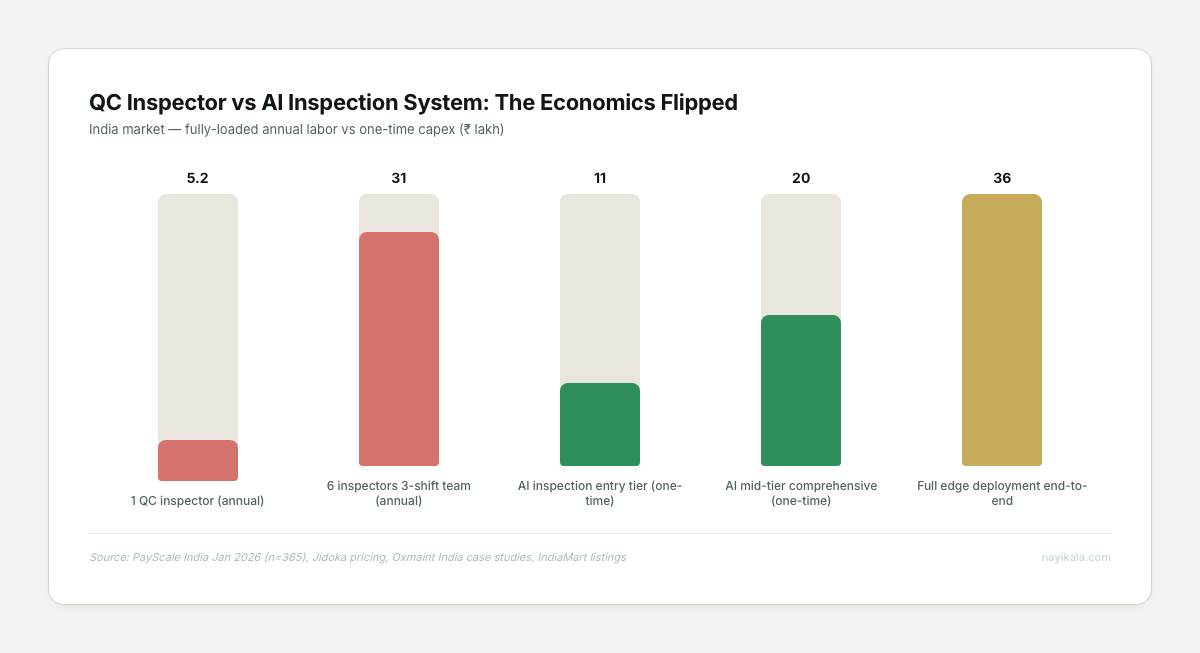

A working edge AI defect detection node now costs less than three months of one QC inspector’s fully-loaded salary. The reason most MSME factories still don’t have one isn’t the hardware. It’s the pipeline nobody talks about.

Walk into a mid-sized auto-component unit in Pimpri at 3 PM. Second shift is tightening up. The QC inspector at the final station has been on his feet since 6 AM, eyes on a lit bench, looking for cracks on forged rockers at 4 seconds per part. By 4 PM, his miss rate will have drifted up by 15-20%. By 6 PM, it’s closer to 30%. The owner knows this. The customer, a Tier-1 supplying an OEM, knows this too, which is why they inspect the same parts again when the shipment lands. Two QC stations, one part, and a rejection rate that still shows up in the monthly supplier scorecard.

Now price it the other way. An NVIDIA Jetson Orin Nano Super dev kit is $249 for 67 TOPS of edge compute (NVIDIA, Jan 2025). A 5MP Basler industrial camera lands at about ₹28,000. A full on-premise AI inspection deployment in India sits between ₹7-15 lakh at the entry band (Jidoka pricing, IndiaMart listings). A fully-loaded QC inspector in India (base salary plus PF, ESI, leave cover, recruitment) costs ₹4.8-5.6 lakh per year (PayScale India, Jan 2026, n=365). A 3-shift factory with two inspectors per shift is burning ₹28-34 lakh a year in QC labor alone, before you count the defects that slip through.
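
The labor figure is simple multiplication, and it is worth redoing with your own headcount. A minimal sketch, using only the per-inspector range quoted above; the function name and the shift layout passed in are illustrative assumptions:

```python
# Fully-loaded cost per inspector per year, in lakh rupees (range quoted above).
INSPECTOR_COST_LAKH = (4.8, 5.6)


def annual_qc_labor(shifts: int, inspectors_per_shift: int) -> tuple[float, float]:
    """Return the (low, high) yearly QC labor cost in lakh rupees."""
    headcount = shifts * inspectors_per_shift
    low, high = INSPECTOR_COST_LAKH
    return headcount * low, headcount * high


# Three shifts, two inspectors per shift, as in the example above.
labor_low, labor_high = annual_qc_labor(shifts=3, inspectors_per_shift=2)
print(f"QC labor: ₹{labor_low:.1f}-{labor_high:.1f} lakh per year")
```

Swap in your own shift count and inspector cost before comparing against any vendor quote.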

The economics flipped a year ago. The adoption curve did not.

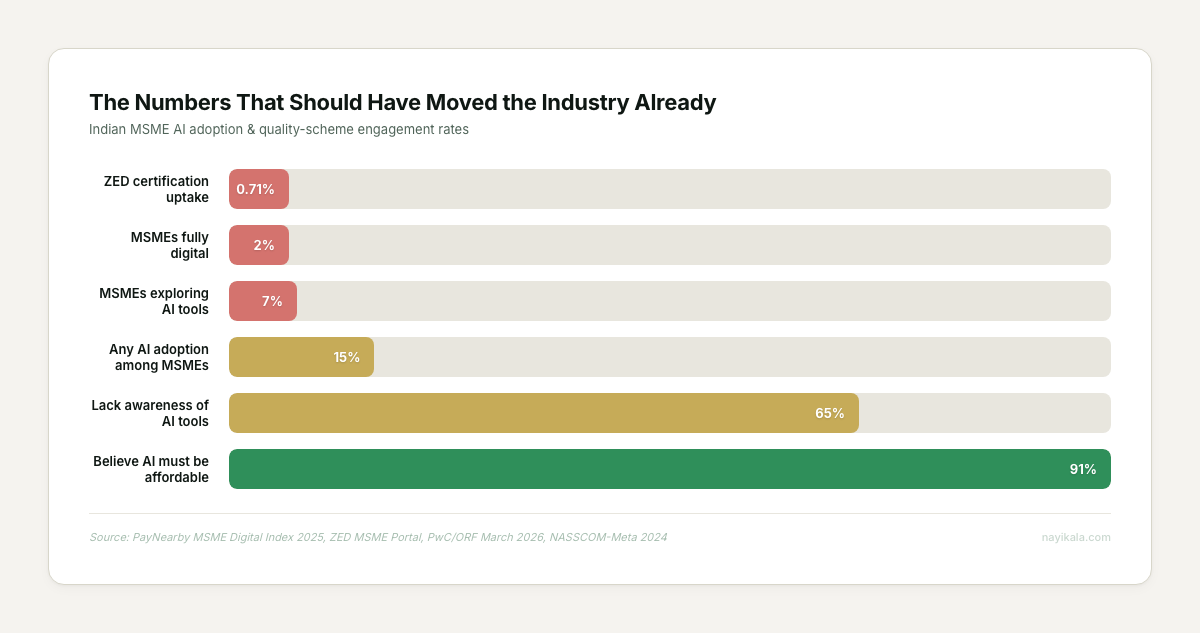

The numbers that should have moved the industry already

Only 7% of Indian MSMEs are actively exploring AI tools (PayNearby MSME Digital Index 2025). Broader "any AI" adoption sits at 15% (PwC/ORF, March 2026). ZED — the government’s flagship Zero-Defect-Zero-Effect scheme, free for women-led units — has 2.83 lakh certifications against ~4 crore registered MSMEs. That’s 0.71% participation.

The Oxmaint case studies from the last 18 months read like they were fabricated for a deck:

- Coimbatore textile mill (₹420 cr revenue): ₹48L invested, ₹64L/yr savings, 9-month payback, 133% Y1 ROI

- Nashik food processing: ₹24L in, ₹28L/yr out, 10-month payback

- Pune auto components: ₹32L in, ₹38L/yr reduced scrap, 119% Y1 ROI
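
The payback and ROI figures in these three cases can be reproduced from the investment and savings numbers alone. A rough sketch, assuming first-year ROI here means annual savings divided by investment (a gross definition that matches the quoted 133% and 119%; a net definition that subtracts the investment would give lower numbers). The function names are ours:

```python
def payback_months(invested_lakh: float, savings_per_year_lakh: float) -> float:
    """Months until cumulative savings equal the upfront investment."""
    return 12 * invested_lakh / savings_per_year_lakh


def first_year_roi_pct(invested_lakh: float, savings_per_year_lakh: float) -> float:
    """Gross first-year ROI: annual savings as a percentage of investment."""
    return 100 * savings_per_year_lakh / invested_lakh


# (invested, savings per year) in lakh rupees, as quoted in the list above.
cases = {
    "Coimbatore textile": (48, 64),
    "Nashik food processing": (24, 28),
    "Pune auto components": (32, 38),
}

for name, (invested, savings) in cases.items():
    months = payback_months(invested, savings)
    roi = first_year_roi_pct(invested, savings)
    print(f"{name}: payback {months:.1f} months, Y1 ROI {roi:.0f}%")
```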

And at the top of the stack: Tata Steel Kalinganagar — the first Indian factory named a WEF Lighthouse (2019) — unlocked $70-80M in value from analytics; their continuous caster strike rate moved from 67% to 90%. Siemens Amberg hit 99.9988% built-in quality and saved €3.6M a year on scrap. Foxconn’s NxVAE took cosmetic inspection accuracy from 95% to 99% and cut inspection labor 50%.

None of this is theoretical. So why is the Tier-2 MSME still running the same lit bench?

The problem isn’t the camera. The problem is the pipeline.

Every MSME owner we’ve talked to about AI inspection has asked the same first question: "How much for the camera?" The camera is not the product. The camera is one stage of eight.

A working defect detection system has to do all of this, in order:

- Capture — GigE or USB3 camera, calibrated lighting, hardware trigger

- Preprocess — lens correction, histogram normalization, ROI cropping

- Label — CVAT, Roboflow, or SAM2-assisted annotation

- Train — transfer learning off a foundation model (DINOv2, CLIP, SAM2, Florence-2)

- Optimize — quantization to INT8/FP16, pruning, ONNX or TensorRT export

- Edge-deploy — Jetson/Hailo with DeepStream or Triton inference

- Monitor — statistical tracking of confidence distributions, FP/FN rates

- Retrain — triggered by drift or set cadence, with an active-learning queue for low-confidence samples
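
The last two stages are the ones that tend to exist only on a slide, so here is a minimal sketch of what they can look like in code. It assumes the model emits a defect score between 0 and 1 per frame; the 0.4-0.6 review band, the 0.10 drift limit, and both function names are hypothetical, and a mean shift is a deliberately crude stand-in for a proper distribution test:

```python
from statistics import mean


def low_confidence_queue(scored_frames, band=(0.4, 0.6)):
    """Return frame ids whose defect score falls in the uncertain band.

    These are the frames worth sending to a human for labeling first.
    """
    low, high = band
    return [frame_id for frame_id, score in scored_frames if low <= score <= high]


def score_drifted(baseline_scores, recent_scores, max_shift=0.10):
    """Flag drift when the mean defect score moves more than max_shift."""
    return abs(mean(recent_scores) - mean(baseline_scores)) > max_shift


# Made-up scores for illustration only.
scored = [("f001", 0.05), ("f002", 0.55), ("f003", 0.97), ("f004", 0.41)]
review_queue = low_confidence_queue(scored)
drift = score_drifted([0.10, 0.12, 0.08], [0.30, 0.28, 0.35])
print(review_queue, drift)
```

The point of the sketch is ownership, not statistics: someone has to run this check on a schedule and act on the queue it produces.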

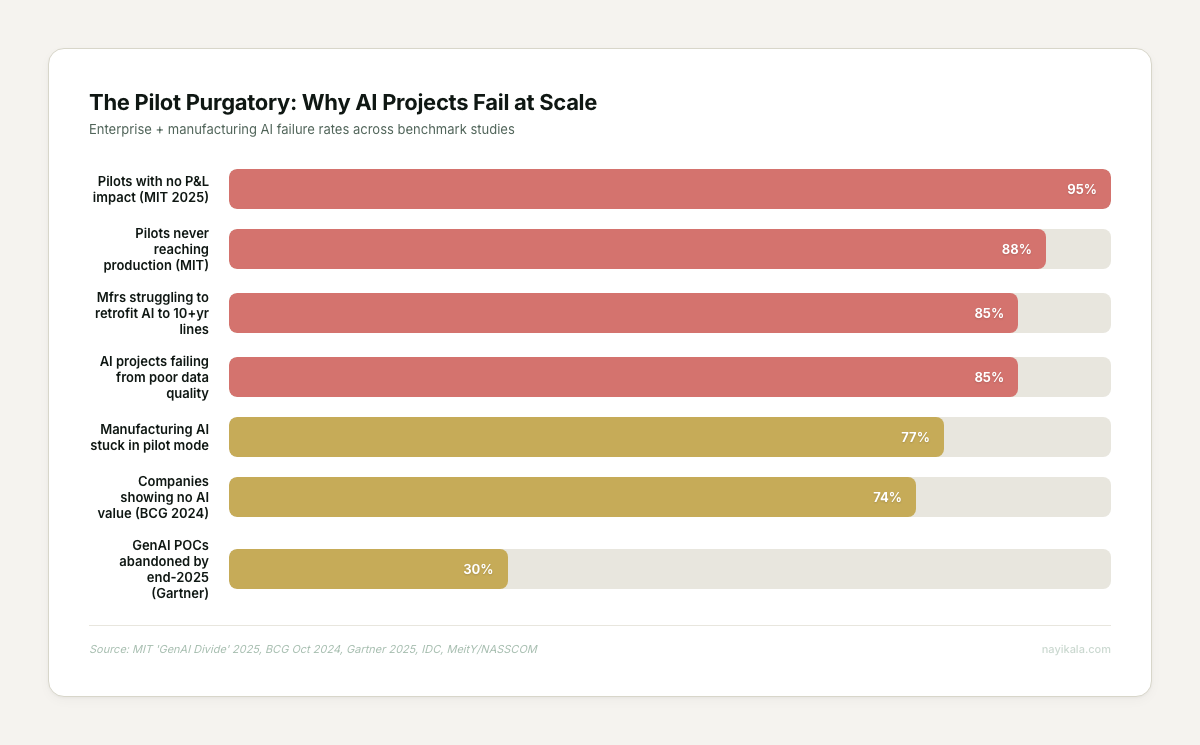

Stages 1 and 6 (capture and edge-deploy) are what vendors sell. Stages 2, 3, 7, and 8 (preprocess, label, monitor, and retrain) are what kill the system in month four. 88% of enterprise AI pilots never reach production (MIT "GenAI Divide" 2025). For every 33 AI pilots, IDC finds only 4 make it to live deployment, which is the same roughly 12% survival rate. That 88% is not a technology ceiling. It’s a pipeline ceiling.

The specific failure modes we see most often:

- The training set was collected in one week. The lighting was right, the conveyor was clean, the supplier batch was uniform. Month two, a new coil of steel comes in, the surface finish shifts slightly, and the model’s false positive rate crosses 5%. Operators stop looking at the alerts within a week.

- Nobody owns retraining. The integrator walks off-site, the model is frozen in time, and every seasonal variation and new supplier part drags accuracy down.

- The data never existed. The MSME had no digitized defect log to begin with, so there’s no historical training set — 67% of enterprises report data silos, 54% cite poor data quality (MeitY/NASSCOM). Every "we don’t know our own defect distribution" conversation adds weeks to the build.

- The retrofit broke. 65% of manufacturers struggle to retrofit AI to 10+ year old lines (industry survey cited in MeitY/NASSCOM barrier analysis). Older PLCs need a protocol gateway — HMS Anybus, MOXA — to bridge Modbus TCP to OPC-UA so the reject arm actually fires within the 300ms budget at 60 m/min.

This is why the PwC/ORF MSME promoter quote keeps showing up in every conversation: "Show me the real value AI will deliver and the time frame within which I can realise it." That’s not a budget question. That’s a "who will still be responsible when the model drifts in December" question.

What you can do this week, for free

Before any quote, any vendor demo, any capex approval, there are three weeks of free work every MSME owner can do themselves, and the first starts this week. This is the work that determines whether the system will actually clear pilot.

Week 1: Digitize your defect ledger. For seven working days, log every rejection at every station. Not on paper. In a spreadsheet with four columns: date-time, part number, defect type, station. Most MSMEs we’ve seen run for years without knowing their top three defect classes by frequency. You cannot train a model, cost a vendor, or scope a pilot without this.
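
Once the spreadsheet exists, the top three defect classes are a few lines of code away. A minimal sketch, assuming the ledger is exported as CSV with the four columns named `date_time`, `part_number`, `defect_type`, and `station` (our naming, not a standard); the sample rows and part numbers are made up:

```python
import csv
import io
from collections import Counter

# Made-up sample ledger; in practice, open the exported CSV file instead.
SAMPLE_LEDGER = """date_time,part_number,defect_type,station
2026-01-05 09:12,RK-101,crack,final
2026-01-05 09:40,RK-101,porosity,final
2026-01-05 11:03,RK-102,crack,forging
2026-01-06 14:21,RK-101,dimension,machining
2026-01-06 15:02,RK-103,crack,final
2026-01-07 10:15,RK-102,porosity,final
"""


def top_defects(ledger_file, n=3):
    """Count rejections per defect type and return the n most frequent."""
    reader = csv.DictReader(ledger_file)
    counts = Counter(row["defect_type"].strip().lower() for row in reader)
    return counts.most_common(n)


result = top_defects(io.StringIO(SAMPLE_LEDGER))
print(result)
```

To run it on a real export, pass `open("ledger.csv", newline="")` instead of the in-memory sample; the file name is a placeholder.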

Week 2: Photograph 200 good parts and 50 bad parts. Phone camera is fine. Same angle, same lighting, same background. Separate folders: good/, defect_porosity/, defect_crack/, defect_dimension/. This is a dataset. It’s not a production dataset, but it’s enough to know whether your defect classes are visually separable at all — which is the single biggest predictor of whether any vision system will work on your line.
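
A quick count per folder tells you whether the 200/50 split actually happened before anyone tries to train on it. A small sketch, assuming the folder layout described above and that photos are saved as JPEG or PNG; the function name is ours:

```python
from pathlib import Path

# File extensions treated as photos; extend if your phone saves another format.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def count_images(dataset_root):
    """Return {class_folder_name: image_count} for each subfolder of dataset_root."""
    counts = {}
    for folder in sorted(p for p in Path(dataset_root).iterdir() if p.is_dir()):
        counts[folder.name] = sum(
            1
            for f in folder.iterdir()
            if f.is_file() and f.suffix.lower() in IMAGE_SUFFIXES
        )
    return counts
```

Calling `count_images("dataset")` on a hypothetical `dataset/` folder returns a dictionary such as one entry for `good` and one per defect folder, which makes an empty or badly lopsided class obvious at a glance.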

Week 3: Walk the line with a stopwatch. At what cycle time is the part presented? Is there a natural trigger point, such as a conveyor position or a sensor that is already there? How long a cable run is it to the nearest power outlet? Is the inspection station covered or exposed to monsoon humidity? This is the survey you’d pay a systems integrator ₹2L to do.

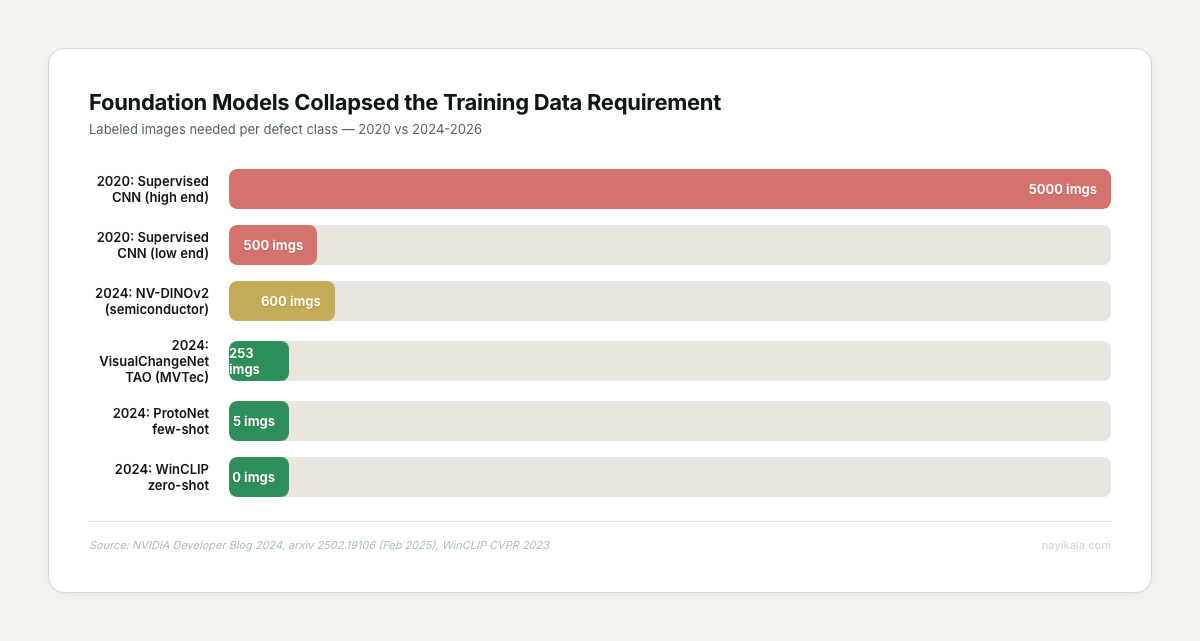

Foundation models changed the training-data math in a way most vendor decks haven’t caught up with. NVIDIA’s NV-DINOv2 hit 98.51% accuracy on semiconductor wafers with 600 samples. VisualChangeNet via NVIDIA TAO hit 99.67% with 253 images from the MVTec-AD benchmark. In 2020, you needed 500-5,000 labeled samples per defect class. In 2026, you need 20-100. Your phone-camera dataset from Week 2 is no longer laughable. It’s genuinely a starting point.

Where it gets harder

Then the pipeline surfaces.

Lighting determines 70% of optical inspection success (Keyence / Roboflow lighting-selection guides). Backlight for edges and silhouettes, dark-field for scratches, dome for curved shiny surfaces, coaxial for flat polished surfaces, structured laser for 3D profiles. Picking the wrong geometry is not a small error; it’s the difference between 99% accuracy and 60%. Lighting enclosures to block ambient drift are infrastructure, not accessories.

At 60 m/min line speed the latency budget from capture to reject-arm actuation is 300ms. Every hop has to fit inside that: capture, preprocess, inference, decision, PLC signal. This is why cloud-dependent systems are non-starters on a production line: edge-local inference, at 99.9%+ uptime, is mandatory. Integration protocol matters: OPC-UA for structured plant communication, Sparkplug B over MQTT for MES/cloud pub-sub, EtherNet/IP or Profinet for deterministic PLC integration. Older lines need a gateway; that’s a design decision, not a purchase.
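
It helps to write the budget down as numbers. At 60 m/min the belt moves 1 mm every millisecond, so 300ms is 300 mm of travel between the camera and the reject arm. A back-of-envelope sketch; the per-stage latencies below are hypothetical placeholders, not measurements, and should be replaced with timings from your own rig:

```python
LINE_SPEED_M_PER_MIN = 60
BUDGET_MS = 300

# Hypothetical per-hop latencies in milliseconds; measure your own.
STAGE_MS = {
    "capture": 20,
    "preprocess": 15,
    "inference": 60,
    "decision": 5,
    "plc_signal": 50,
}


def belt_travel_mm(speed_m_per_min: float, elapsed_ms: float) -> float:
    """Distance the part moves on the belt during elapsed_ms."""
    mm_per_ms = speed_m_per_min * 1000 / 60_000
    return mm_per_ms * elapsed_ms


total_ms = sum(STAGE_MS.values())
headroom_ms = BUDGET_MS - total_ms
print(f"pipeline: {total_ms} ms, headroom: {headroom_ms} ms")
print(f"belt travel within budget: {belt_travel_mm(LINE_SPEED_M_PER_MIN, BUDGET_MS):.0f} mm")
```

If the headroom goes negative once real numbers are in, the fix is a design change (trigger position, model size, gateway choice), not a faster camera.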

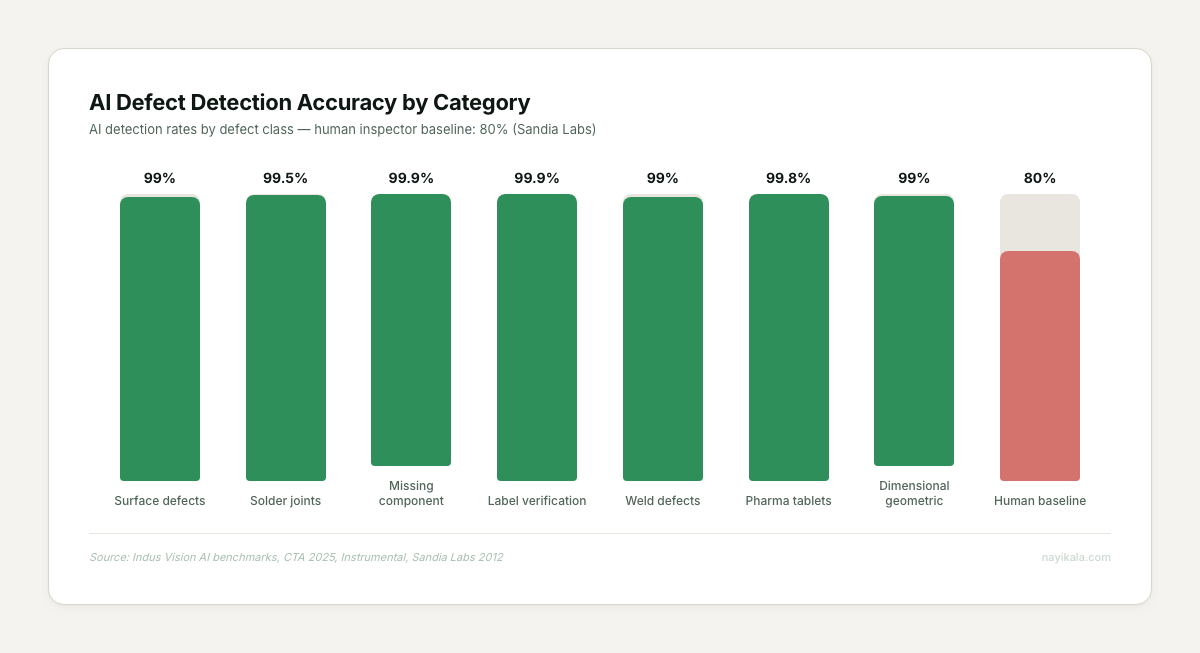

Then the class-imbalance trap. When defects are <0.1% of output, a model that predicts "good" every time scores 99.9% accuracy and catches zero defects. This is why vendors who show you raw accuracy numbers without precision-recall curves are showing you nothing.
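
The trap is easy to reproduce. A toy sketch with synthetic labels at a 0.1% defect rate and a "model" that always predicts good; nothing here comes from a real line:

```python
# Synthetic batch: 1 = defective, 0 = good. 100 defects in 100,000 parts (0.1%).
y_true = [1] * 100 + [0] * 99_900

# A do-nothing model that calls every part good.
y_pred = [0] * len(y_true)

true_pos = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
false_neg = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)

accuracy = correct / len(y_true)
recall = true_pos / (true_pos + false_neg)

print(f"accuracy: {accuracy:.1%}, recall on defects: {recall:.1%}")
```

Accuracy looks excellent while recall on the defect class is zero, which is why recall and precision at a chosen threshold are the numbers to ask for.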

Mitigation is anomaly detection framing (train on normals only), synthetic oversampling with diffusion augmentation, and threshold calibration against your actual operator alert-fatigue threshold — which, on a high-throughput line, is around 5% FPR before operators start tuning out.
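
Threshold calibration against a false-positive cap can be sketched in a few lines. This assumes an anomaly-detection setup where each part gets an anomaly score (higher means more suspicious) and you have scores for a validation batch of known-good parts; the function name and the synthetic scores are ours:

```python
def threshold_for_fpr(good_scores, max_fpr=0.05):
    """Pick a threshold so that at most max_fpr of known-good parts are flagged.

    A part is flagged when its anomaly score is strictly above the threshold.
    """
    ranked = sorted(good_scores, reverse=True)
    allowed = int(max_fpr * len(ranked))  # good parts we tolerate flagging
    return ranked[allowed]


# Synthetic anomaly scores for 100 known-good parts: 0.00, 0.01, ..., 0.99.
good_scores = [i / 100 for i in range(100)]

threshold = threshold_for_fpr(good_scores, max_fpr=0.05)
flagged = sum(1 for s in good_scores if s > threshold)
print(f"threshold: {threshold}, false positives: {flagged} of {len(good_scores)}")
```

The threshold then has to be rechecked against real defect samples, because a cap on false positives says nothing by itself about how many defects still get through.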

And then the thing no one puts on the quote: DPDP compliance. Under the DPDP Act 2023 and its November 2025 Rules, factory CCTV that captures identifiable workers is processing personal data. The factory owner is the Data Fiduciary, not the vendor. Compliance deadline is May 13, 2027. Privacy-by-design means camera FOV restricted to the part-inspection angle only, raw frames auto-deleted in 24-48 hours, and worker consent and notice obligations documented, even under the employment-context legitimate-purpose exception. STQC CCTV certification became mandatory in April 2025. Most vendors we’ve looked at are not yet routing their systems through this.
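
The auto-delete part is the easiest piece to implement and the easiest to forget. A minimal sketch of a retention job, assuming raw frames are written as files into a single folder and that file modification time is an acceptable proxy for capture time; the function name and the 24-hour default are illustrative, and the actual window is a policy decision:

```python
import time
from pathlib import Path


def purge_old_frames(frame_dir, max_age_hours=24.0, now=None):
    """Delete files in frame_dir older than max_age_hours.

    Returns the sorted names of deleted files so the run can be logged.
    """
    now = time.time() if now is None else now
    cutoff = now - max_age_hours * 3600
    deleted = []
    for path in Path(frame_dir).iterdir():
        # Only plain files are touched; subfolders are left alone.
        if path.is_file() and path.stat().st_mtime < cutoff:
            path.unlink()
            deleted.append(path.name)
    return sorted(deleted)
```

Run it from a scheduler on the edge box itself and keep the returned list in a log, since a documented deletion trail is part of what makes the retention policy demonstrable.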

The vendor ecosystem has not caught up either. Jidoka (Chennai, 2018), Lincode, Qualitas, Ripik.ai — all strong teams, all building for Tier-1 auto, pharma, steel, aerospace. Their named customers are Nexteer, ZF Rane, TVS Sundaram Clayton, Tata Motors, Maruti Suzuki, ISRO suppliers. There is no MSME-tier product line from any of them. Tracxn shows 282 active CV companies in India and a 90% funding collapse in the segment through Sep 2025 ($100M → $8.67M). The vendor side is consolidating around enterprise, not expanding toward the Tirupur powerloom.

The only reliable adoption trigger

Across every MSME AI vision deployment we’ve tracked in India, one signal predicts adoption better than cost, awareness, or scheme availability: a global buyer asking for it. Coimbatore textile units exporting to the EU via Tirupur have moved. Surat packaging units with US retail buyers have moved. Pune auto-component MSMEs with Tier-1 contracts have moved. MSME exports are 48.58% of India’s total; exporting MSMEs grew from 52,849 to 1,73,350 in four years. Those are the ones adopting.

The domestic-market MSMEs without a buyer forcing the question are not moving. Not because the ROI isn’t there — the Oxmaint Pune case proves it is — but because the pipeline work is invisible until it breaks, and with ₹8.1 trillion stuck in delayed payments (Economic Survey 2025-26) and a ₹30 lakh crore credit gap (Credable, 2024), no MSME owner is writing capex checks for an invisible risk.

The real design question isn’t which camera or which model — it’s who owns the retraining queue, the drift thresholds, and the DPDP breach-notification path twelve months after the integrator has left the floor.
