The gap between AI enthusiasm and AI ROI in Indian MSMEs isn’t a technology problem — it’s a process problem wearing a technology mask. Here’s how to diagnose whether your business is actually ready, and what to fix before you spend a rupee on tools.

The Tool You Bought Last Quarter

Somewhere in your tech stack right now, there is an AI tool nobody uses. Maybe it is the chatbot you bolted onto your website that answers three questions correctly and fumbles the fourth. Maybe it is the CRM’s “AI-powered insights” tab that no one on your team has opened since the free trial converted. Maybe it is the ₹4,000/month subscription your operations head signed up for after a LinkedIn demo, used for two weeks, and quietly stopped mentioning in stand-ups.

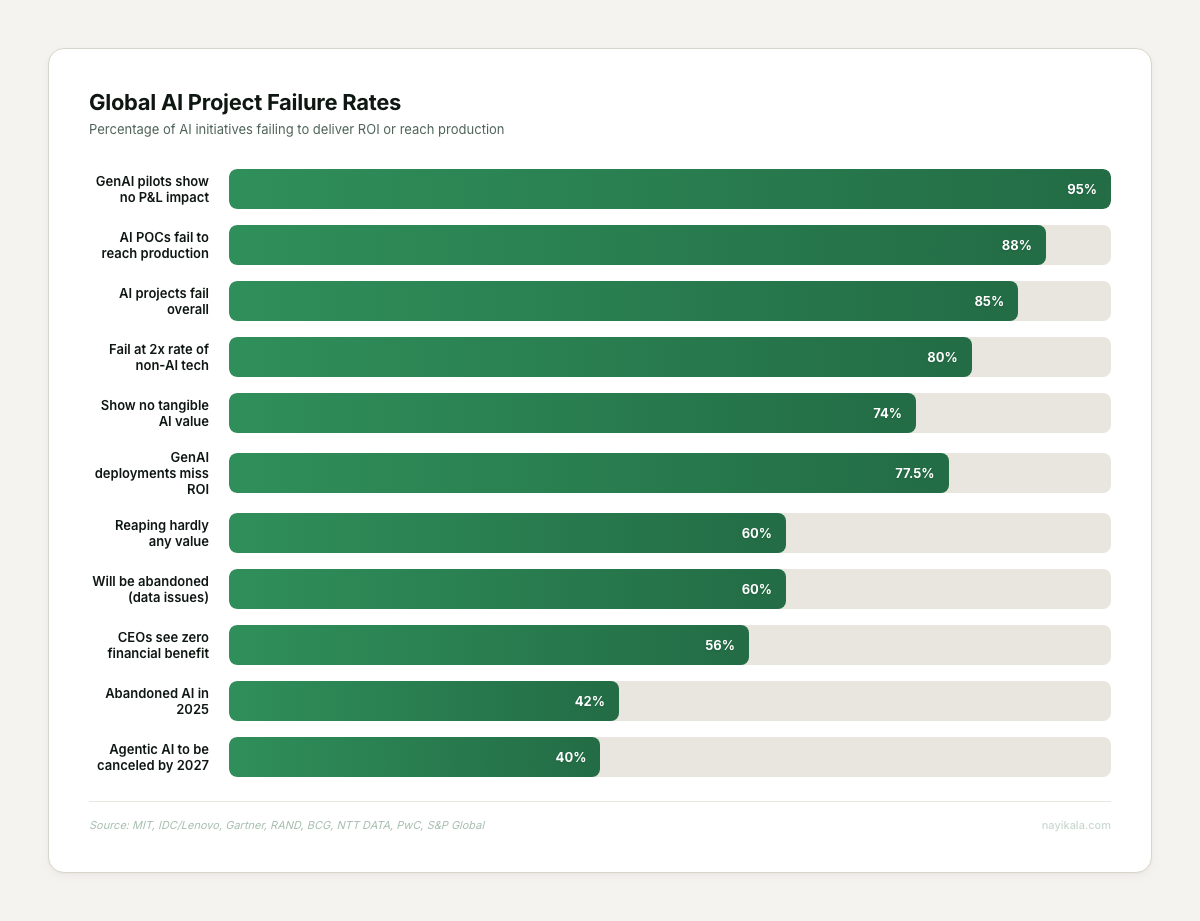

You are not alone. 56% of CEOs globally report zero financial benefit from AI (PwC 29th Annual CEO Survey, January 2026, 4,454 CEOs across 95 countries). Not negative ROI. Not “too early to tell.” Zero.

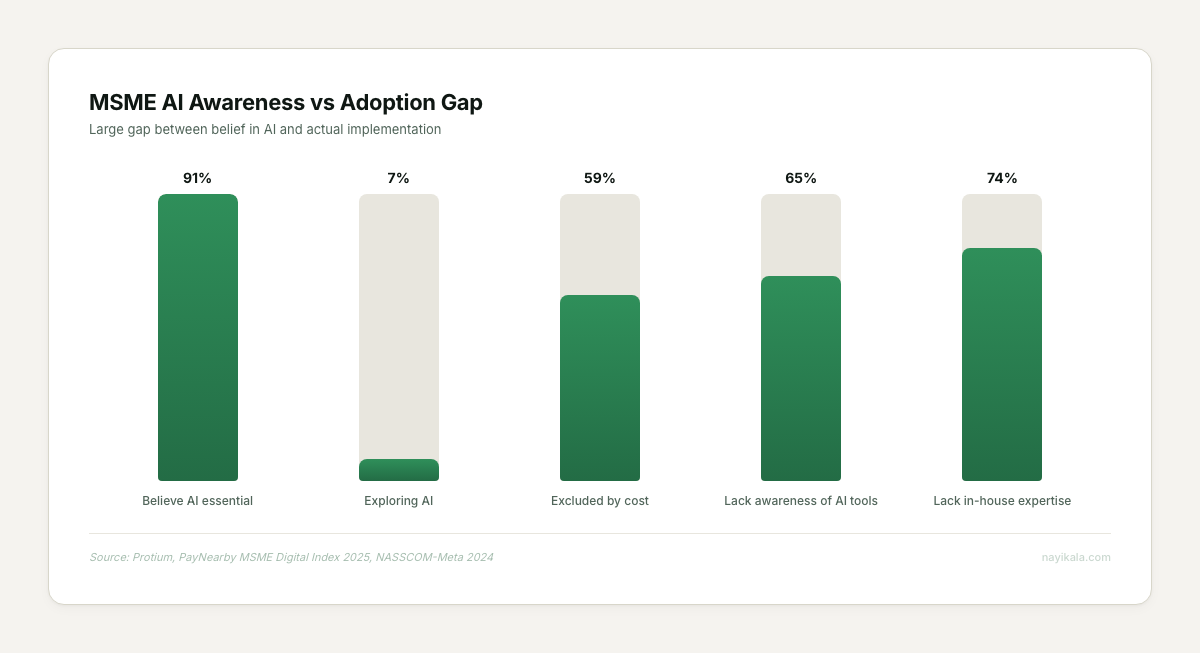

And that is the global number — companies with data teams, with cloud infrastructure, with budgets to burn through pilots. For Indian MSMEs, the picture is starker. Only 7% of MSMEs have even explored AI, according to PayNearby’s 2025 MSME Digital Index surveying over 10,000 businesses. Meanwhile, 91% say they believe AI is essential (Protium survey, same year). That is an 84-point gap between belief and action. And the businesses that have crossed into action are mostly lighting money on fire.

This is not an article about whether AI works. It does. Dunzo cut support response times from 4 minutes to 46 seconds. BigBasket reduced perishable inventory wastage by 35%. Razorpay’s AI routing engine is designed to prevent ₹7,000 crore in annual payment failures (company-reported, 2025). AI works when it is deployed against the right problem, with the right data underneath it, inside a process that was already understood before anyone typed “AI” into a search bar.

The question is whether your business is ready for it. Not philosophically. Operationally.

The Broken Diagnostic

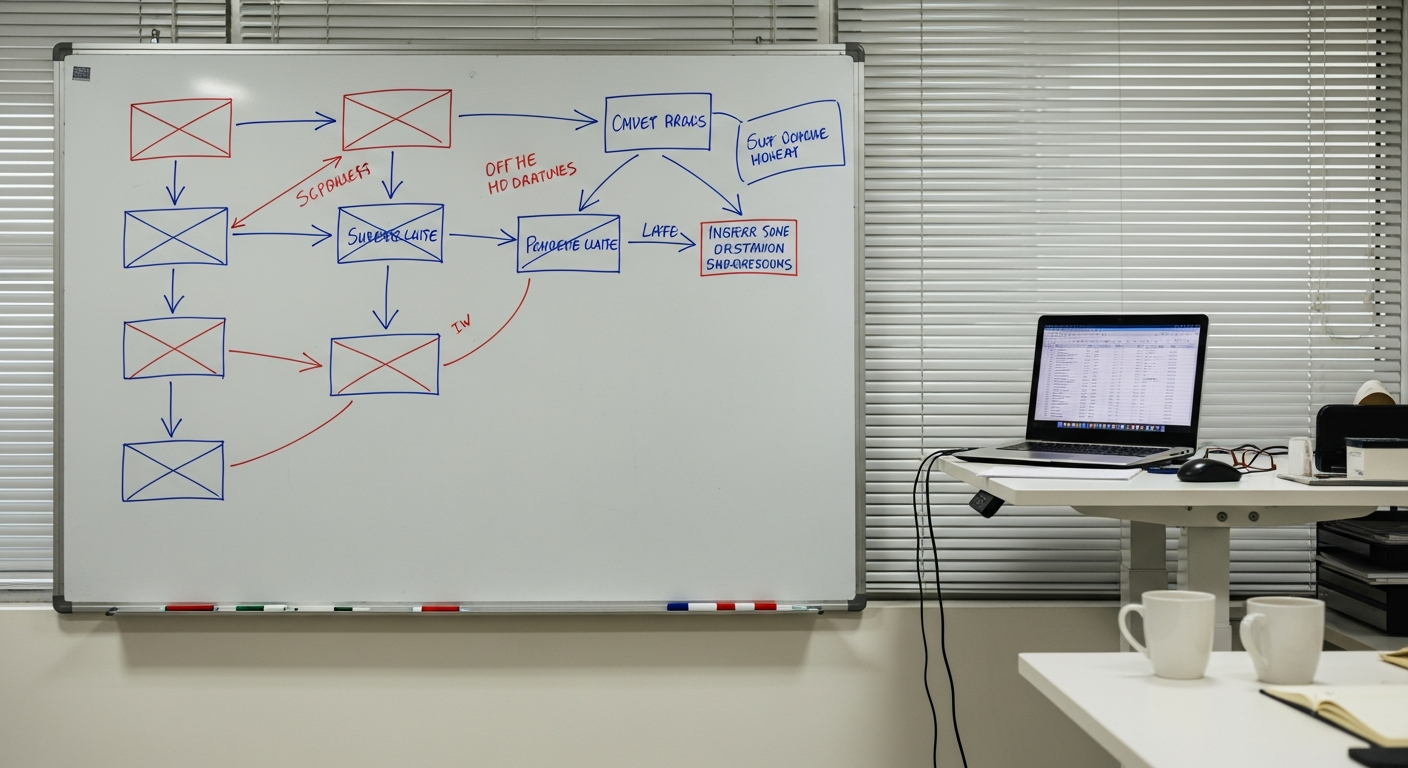

Here is what we have seen in the businesses that came to us after a failed AI project. The pattern is almost identical every time.

A founder reads about Zomato’s Nugget platform handling 15 million monthly support interactions with 80% autonomous resolution. Or they hear that Meesho handled 40% more customer service traffic without hiring a single new agent. They think: if Zomato can automate 80% of support, surely I can automate my 50 daily WhatsApp inquiries.

So they buy a chatbot tool. They spend a weekend feeding it their FAQ document. They connect it to WhatsApp Business — because 97% of Indian MSMEs already use WhatsApp or WhatsApp Business (PayNearby 2024). They launch it on Monday. By Wednesday, three customers have complained about getting nonsensical responses. By Friday, the operations manager is manually answering every message again anyway, now with the extra step of checking what the bot said first.

The tool was not the problem. The problem definition was.

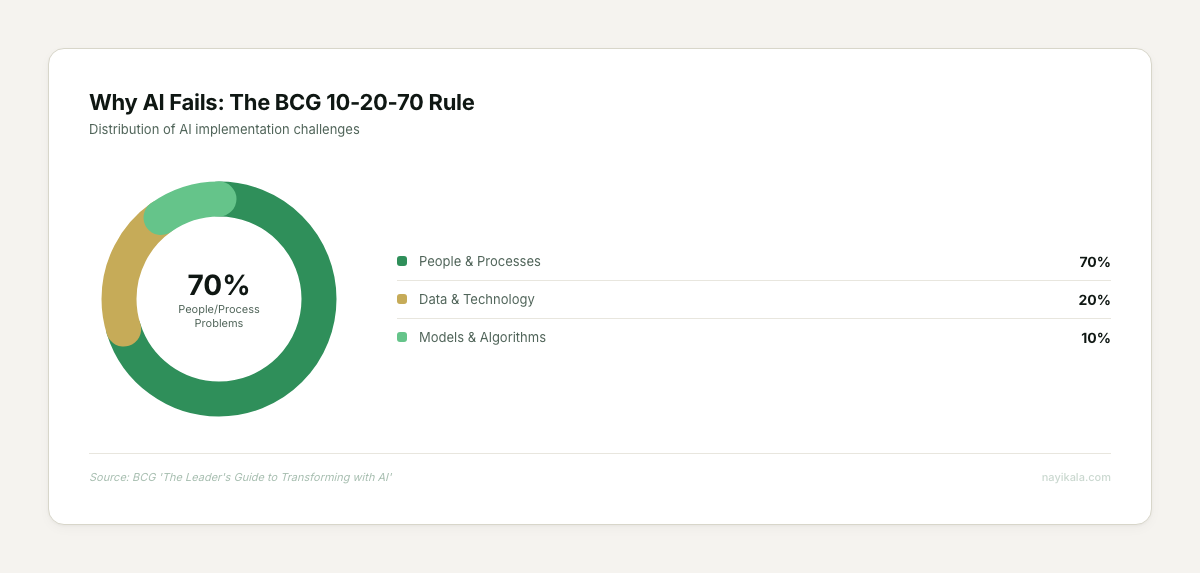

RAND Corporation studied AI project failures across 65 in-depth interviews and found that “stakeholders often misunderstand what problem needs to be solved.” Problem definition failures outweigh all technical failures combined. BCG puts it in a ratio they call the 10-20-70 rule: 10% of AI implementation effort is the algorithm, 20% is the data and technology backbone, and 70% is people and processes. The businesses that fail — and 74% of companies show no tangible AI value according to BCG’s survey of 1,000 CxOs — are spending 90% of their energy on the 10%.

The chatbot vendor did not ask you to map your actual customer inquiry flow before deploying. They did not ask how many of your support queries require account-specific data that lives in a spreadsheet your accountant updates on Tuesdays. They did not ask whether your team even agrees on what a “resolved” inquiry looks like.

One detail that keeps coming up in the businesses we have worked with: the SOP is fiction. As Skan.ai put it, “Most SOPs document what should happen, not what actually happens.” You cannot automate a process you have not actually mapped. Not the version in the Google Doc from 2023. The version that happens at 3 PM on a Thursday when your best salesperson is on leave.

What You Can Do This Week

Before you evaluate any AI tool, run this diagnostic on your own business. It costs nothing except honesty.

Map One Process End-to-End

Pick the process that frustrates you most. Customer inquiries. Order fulfillment. Invoice follow-up. Whatever it is.

For one full week, document every step as it actually happens. Not the SOP version. The real version. Write down: where does the trigger come from (WhatsApp message, missed call, email, walk-in)? Who sees it first? How long before someone acts on it? What information do they need to act? Where does that information live? How many handoffs happen before it is resolved?

You will find things. Every business we have run this exercise with finds things. The inquiry that sat in a WhatsApp group for 6 hours because the person who usually handles it was in a meeting. The order that required checking three different spreadsheets because inventory lives in one, pricing in another, and customer history in the head of a person who has been here for four years.
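If a paper notebook feels too loose for that week of observation, the same questions can be logged in a fixed shape. The following is a minimal Python sketch, not a prescribed tool: the `Step` fields, the `summarize` helper, and the sample inquiry are all hypothetical names chosen for illustration. It counts handoffs, the places information had to be fetched from, and how long a case sat idle between steps.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Step:
    """One observed step in handling a single case (an inquiry, an order)."""
    case_id: str
    actor: str        # who actually did it, not who the SOP says
    info_source: str  # where the information they needed lived
    started: datetime
    finished: datetime


def summarize(steps):
    """Count handoffs and waiting time for one case's observed steps."""
    ordered = sorted(steps, key=lambda s: s.started)
    pairs = list(zip(ordered, ordered[1:]))
    handoffs = sum(1 for a, b in pairs if a.actor != b.actor)
    # Idle time is the gap between one step finishing and the next starting.
    idle = sum(
        (max(b.started - a.finished, timedelta(0)) for a, b in pairs),
        timedelta(0),
    )
    return {
        "handoffs": handoffs,
        "info_sources": sorted({s.info_source for s in ordered}),
        "idle": idle,
        "total": ordered[-1].finished - ordered[0].started,
    }


# Hypothetical log: one inquiry that waited in a WhatsApp group for 6 hours.
log = [
    Step("INQ-1", "front desk", "WhatsApp group",
         datetime(2025, 1, 9, 9, 0), datetime(2025, 1, 9, 9, 5)),
    Step("INQ-1", "sales", "inventory sheet",
         datetime(2025, 1, 9, 15, 5), datetime(2025, 1, 9, 15, 20)),
]
summary = summarize(log)
print(summary["handoffs"], summary["idle"], summary["total"])
```

The point of the fixed shape is comparison: once every case is logged the same way, the cases with the most handoffs or the longest idle time stand out without anyone having to remember them.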

Count Your Data Sources

Open a blank sheet. List every place your business stores information. Tally forms. Google Sheets. WhatsApp chats. The notebook at the billing counter. The accountant’s laptop. Your personal phone’s photo gallery where you photograph handwritten orders.

Now circle the ones that talk to each other. In most of the businesses we have mapped, spreadsheets run alongside the CRM and almost none of them talk to each other. Your circles will probably be islands too.

AI needs connected data. Not perfect data — connected data. If the information an AI agent would need to answer a customer’s question lives across four disconnected places, no amount of prompt engineering fixes that.
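The circling exercise is a connected-components count, and it can be done in a few lines once the list exists. This is a rough Python sketch under assumptions: the source names and the single sync link are made up for illustration, and `islands` is a hypothetical helper, not part of any tool mentioned here.

```python
def islands(sources, links):
    """Group data sources into clusters that can reach each other via links."""
    neighbours = {s: set() for s in sources}
    for a, b in links:
        neighbours[a].add(b)
        neighbours[b].add(a)

    seen, groups = set(), []
    for start in sources:
        if start in seen:
            continue
        # Walk outward from this source to collect everything it connects to.
        group, stack = set(), [start]
        while stack:
            node = stack.pop()
            if node in group:
                continue
            group.add(node)
            stack.extend(neighbours[node] - group)
        seen |= group
        groups.append(group)
    return groups


# Hypothetical inventory: five places data lives, one automated sync.
sources = ["Tally", "Google Sheets", "WhatsApp chats", "Counter notebook", "CRM"]
links = [("Google Sheets", "CRM")]
groups = islands(sources, links)
print(f"{len(sources)} sources, {len(groups)} islands")
```

Fewer islands than sources means something is already connected. One island per source means every question that spans two systems is answered by a person copying between them.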

Measure Your Response Baseline

For that same week, track one number: the time from customer inquiry to first human response. Track it across every channel: WhatsApp, Instagram DMs, email, phone, website form.

Dukaan’s AI cut first response time from 1 minute 44 seconds to instant. But that number only meant something because they knew the 1:44 baseline. You need your baseline before you can evaluate whether any tool moves it.

Write the number down. It is probably worse than you think.
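Turning the week's timestamps into a baseline is simple arithmetic. Here is one way to sketch it in Python, assuming you have recorded a channel, a received time, and a first-reply time for each inquiry; the sample rows and the `response_baseline` name are illustrative only. The sketch uses the median rather than the average, because a single inquiry that waited overnight would otherwise dominate the number.

```python
from collections import defaultdict
from datetime import datetime
from statistics import median


def response_baseline(log):
    """Return (overall median, per-channel median) wait in minutes."""
    by_channel = defaultdict(list)
    for channel, received, replied in log:
        wait_minutes = (replied - received).total_seconds() / 60
        by_channel[channel].append(wait_minutes)
    all_waits = [w for waits in by_channel.values() for w in waits]
    per_channel = {c: median(w) for c, w in by_channel.items()}
    return median(all_waits), per_channel


# Hypothetical rows: (channel, inquiry received, first human reply).
log = [
    ("whatsapp", datetime(2025, 1, 6, 10, 0), datetime(2025, 1, 6, 10, 4)),
    ("whatsapp", datetime(2025, 1, 6, 13, 30), datetime(2025, 1, 6, 14, 15)),
    ("email", datetime(2025, 1, 7, 18, 50), datetime(2025, 1, 8, 9, 50)),
]
overall, per_channel = response_baseline(log)
print(overall, per_channel)
```

Splitting by channel matters because the slow channel is usually the one nobody owns.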

Where It Gets Harder

Everything above — process mapping, data inventory, response baselining — is free and invaluable. It will make your business better regardless of whether you ever deploy an AI system. But it also reveals the structural layer where things get genuinely complex.

When you map your data sources, you will likely discover that connecting them requires more than a Zapier automation. Your customer data in WhatsApp Business has a different identifier structure than your billing records in Tally. Your product catalog in the Google Sheet does not match the naming conventions your warehouse uses. Stitching these into a single queryable layer — a data pipeline — is an engineering problem, not a configuration problem.
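To make the identifier mismatch concrete, here is a small Python sketch of the first step in any such pipeline: reducing two differently formatted identifiers to one join key. Everything about the record shapes is an assumption for illustration. The field names (`wa_id`, `ledger`, `mobile`) are hypothetical and do not reflect the actual export formats of WhatsApp Business or Tally, and matching on the last 10 digits of a phone number is one possible key, not the only one.

```python
import re
from typing import Optional


def normalize_phone(raw: str) -> Optional[str]:
    """Reduce a phone number to its last 10 digits, or None if too short."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) < 10:
        return None
    return digits[-10:]


# Hypothetical records: the same customer, written two different ways.
whatsapp_contacts = [{"wa_id": "919876543210", "name": "R. Traders"}]
billing_ledgers = [
    {"ledger": "R Traders (Pune)", "mobile": "098765-43210"},
    {"ledger": "Walk-in cash", "mobile": ""},
]

by_phone = {normalize_phone(c["wa_id"]): c for c in whatsapp_contacts}

matched, unmatched = [], []
for row in billing_ledgers:
    key = normalize_phone(row["mobile"])
    contact = by_phone.get(key) if key else None
    (matched if contact else unmatched).append(row["ledger"])

print("matched:", matched)
print("unmatched:", unmatched)
```

The unmatched list is the real deliverable. Every record in it is a customer an AI agent could not look up, and deciding what to do with those records is design work, not configuration.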

Then there is the consent layer. DPDP Rules 2025 roll out in phases: consent manager provisions by November 2026, full algorithmic compliance by May 2027, with penalties up to ₹250 crore per violation. Any AI system that touches customer data needs consent infrastructure built into its architecture, and a WhatsApp chatbot touches customer data by definition. Not a checkbox on a form. An actual consent capture point with purpose limitation, storage rules, and withdrawal mechanisms that propagate across every system holding that data.
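As a rough illustration of what "withdrawal that propagates" means structurally, consider the Python sketch below. It is an architectural toy under stated assumptions, not legal guidance and not a compliant implementation: `ConsentLedger`, its methods, and the purpose names are all hypothetical. It shows only two ideas: consent is recorded per purpose, and a withdrawal notifies every system that registered as holding the data.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Consent:
    customer_id: str
    purpose: str  # one record per purpose, so consent cannot be reused silently
    granted_at: datetime
    withdrawn_at: Optional[datetime] = None


class ConsentLedger:
    def __init__(self):
        self._records = {}    # (customer_id, purpose) -> Consent
        self._listeners = []  # one callback per system holding customer data

    def subscribe(self, callback):
        self._listeners.append(callback)

    def grant(self, customer_id, purpose):
        now = datetime.now(timezone.utc)
        self._records[(customer_id, purpose)] = Consent(customer_id, purpose, now)

    def allows(self, customer_id, purpose):
        record = self._records.get((customer_id, purpose))
        return record is not None and record.withdrawn_at is None

    def withdraw(self, customer_id, purpose):
        record = self._records.get((customer_id, purpose))
        if record is None or record.withdrawn_at is not None:
            return
        record.withdrawn_at = datetime.now(timezone.utc)
        # Propagate the withdrawal to every subscribed system.
        for notify in self._listeners:
            notify(customer_id, purpose)


# Hypothetical wiring: two downstream systems record the withdrawals they see.
notified = []
ledger = ConsentLedger()
ledger.subscribe(lambda cid, purpose: notified.append(("crm", cid, purpose)))
ledger.subscribe(lambda cid, purpose: notified.append(("chatbot", cid, purpose)))

ledger.grant("cust-42", "order_updates")
allowed_before = ledger.allows("cust-42", "order_updates")
ledger.withdraw("cust-42", "order_updates")
allowed_after = ledger.allows("cust-42", "order_updates")
print(allowed_before, allowed_after, notified)
```

Note that consent for `order_updates` says nothing about a purpose such as marketing: `allows` returns `False` for any purpose that was never granted.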

In the businesses we have built these systems for, the pattern is the same: AI is the last layer. The process architecture — which queries route to the bot, which escalate to a human, how consent propagates when a customer switches channels — is where the ROI actually lives. The model selection is a Thursday afternoon decision. The pipeline design is a three-week one.
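The routing decision described above can be written down before any model is chosen. Here is a minimal Python sketch, assuming three illustrative rules: no consent means a human, account-specific questions mean a human, and only a short list of known FAQ topics goes to the bot. The `Inquiry` fields, the `BOT_TOPICS` list, and the `route` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Inquiry:
    text: str
    needs_account_data: bool  # e.g. "where is my order?"
    has_consent: bool         # consent for automated handling on this channel


# Hypothetical topics the FAQ document actually answers well.
BOT_TOPICS = ("opening hours", "delivery area", "return policy")


def route(inquiry: Inquiry) -> str:
    """Return "bot" or "human" for one inquiry. Defaults to a human."""
    if not inquiry.has_consent:
        return "human"
    if inquiry.needs_account_data:
        return "human"
    if any(topic in inquiry.text.lower() for topic in BOT_TOPICS):
        return "bot"
    return "human"


print(route(Inquiry("What is your return policy?", False, True)))
print(route(Inquiry("Why was I charged twice?", True, True)))
```

Defaulting to a human is the design choice that matters here. A bot that only answers what it is known to answer well avoids the "fumbles the fourth question" failure, at the cost of automating less on day one.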

Whether the 91% converge on working systems or another round of abandoned pilots depends on the data pipeline architecture and consent routing underneath — and those are engineering decisions, not tool selections.
