India cut 55,000+ tech jobs in Q1 2026. The survivors inherited triple the workload with no raise. Companies that automate without a plan fail at the same rate as those that don’t automate at all.

It is 9:45 PM on a Tuesday. Your operations lead is still in the office because the person who used to handle vendor reconciliation was let go in January, and someone has to do it. She is also handling customer escalations that used to go to a team of three. She has not taken a day off in six weeks. She is not going to say anything about it because she watched twelve people get walked out, and she is grateful she still has a job.

This is happening in thousands of Indian businesses right now. Not at TCS or Infosys; they have retention bonuses and reskilling budgets. It is happening at the 40-person company that cut to 15 because the funding environment changed. At the services firm that lost two clients and shed half the team. At the D2C brand that automated customer support with a chatbot and then realized someone still needed to handle the cases the chatbot could not.

The layoff headlines are about the people who left. This post is about the people who stayed.

The Survivor Math Does Not Work

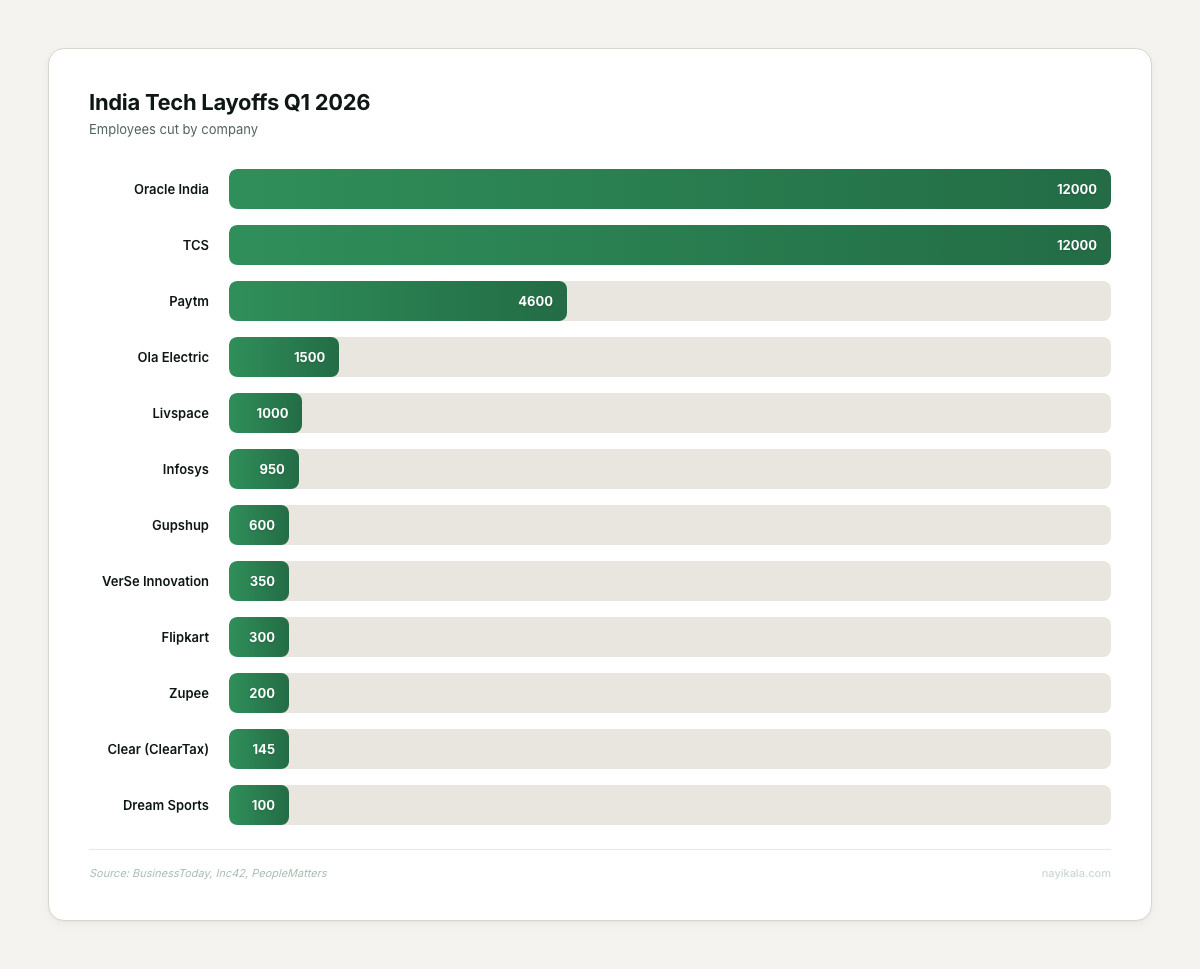

India lost 55,000+ tech jobs in Q1 2026 alone. Oracle India terminated 12,000 employees — 40% of their Indian workforce — via a 6 AM email on March 31 (BusinessToday, April 2026). TCS shed 30,906 headcount over two quarters. Infosys cut 950+ positions including 700 trainees. Flipkart, Zupee, Livspace, Dream Sports — the list runs long.

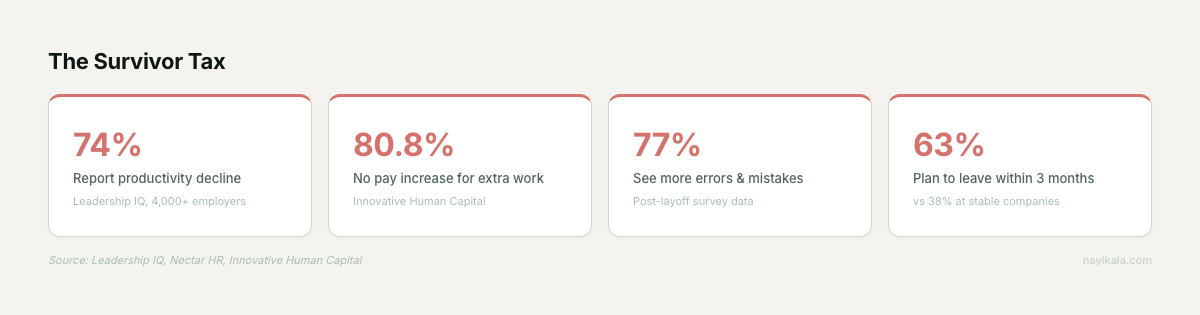

But here is the number nobody puts in the headline: 80.8% of survivors who took on additional work received no pay increase (Innovative Human Capital, 2025 survey of post-layoff workforces — US data, but directionally consistent with what we see in India). Not a smaller raise. No raise at all.

The businesses we have seen go through this follow a predictable pattern. First, the cuts feel surgical — duplicate roles, underperformers, that team that was never quite justified. Then the work those people did lands on the remaining team’s desks. Not redistributed with a plan. Just... landed. The finance person starts doing procurement follow-ups. The account manager starts doing reporting. The ops lead starts doing everything.

In the teams we have worked with post-layoff, productivity drops are visible within weeks. Leadership IQ found similar patterns at scale: 74% of survivors reported productivity declines, and 77% reported more errors and mistakes (survey of 4,000+ employees who kept their jobs after a layoff). This is not soft sentiment data. This is operational degradation reported by thousands of the people doing the work.

The compounding effect is brutal. Nearly one-third of tech companies conducted two or more layoff rounds between 2023 and 2025, and 70% of repeat layoffs happen within 12 months of the first round (Marketplace, March 2026). Each new round lands on people who are still absorbing the previous one. Recovery takes 18 to 24 months, and only if there is no second round.

In India specifically, 83% of tech workers report burnout (All Things Talent / Indeed survey of 5,000+ IT workers across Bengaluru, Hyderabad, and Pune, 2024). One in four logs 70+ hours a week. Only 15% said their employer offered any burnout prevention program. These are not abstract wellbeing metrics. This is the team that is supposed to be doing more with less.

The Automation Trap

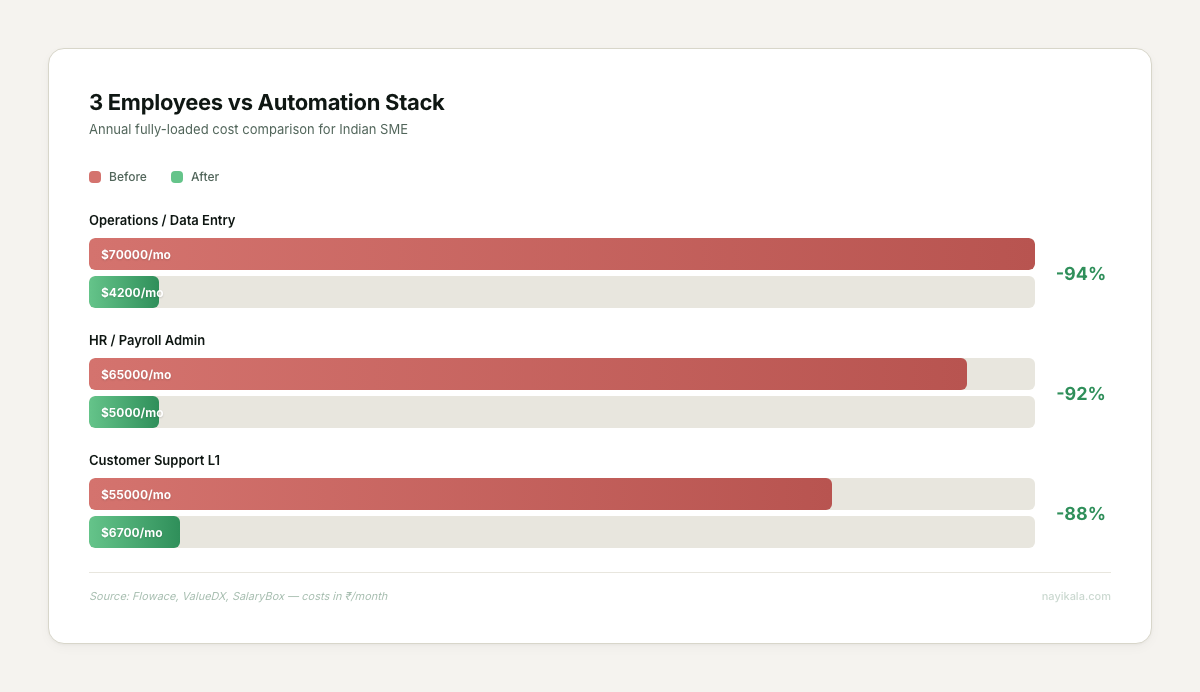

So the obvious move is to automate. And a lot of companies are doing exactly that — almost every business we talk to is planning some kind of automation investment, a pattern that tracks with Automation Anywhere’s 2024 finding that 63% of Indian enterprises planned to invest in intelligent automation and GenAI within 12 months. The pitch is clean: replace the headcount you cut with software. Pay for tools instead of salaries. In the cost models we have built for clients, three employees doing data entry, payroll admin, and L1 support cost roughly Rs 19.5-21 lakhs per year fully loaded. An automation stack handling similar work costs about Rs 1.9 lakhs per year. The math is real.
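Here is that comparison as plain arithmetic. It is a minimal sketch using only the figures quoted above; the function names are ours, and it assumes the automation fully covers the work.

```python
# Figures from the cost models described above, in lakhs of rupees per year.
STAFF_COST_LOW = 19.5    # three employees, fully loaded (low estimate)
STAFF_COST_HIGH = 21.0   # three employees, fully loaded (high estimate)
AUTOMATION_COST = 1.9    # automation stack handling similar work


def annual_saving(staff_cost, automation_cost=AUTOMATION_COST):
    """Gross saving per year, assuming the automation fully replaces the work."""
    return staff_cost - automation_cost


def cost_ratio(staff_cost, automation_cost=AUTOMATION_COST):
    """How many times more the staff cost than the automation stack."""
    return staff_cost / automation_cost


for cost in (STAFF_COST_LOW, STAFF_COST_HIGH):
    print(
        f"Staff Rs {cost:.1f}L -> saving Rs {annual_saving(cost):.1f}L, "
        f"ratio {cost_ratio(cost):.1f}x"
    )
```

On paper that is a gross saving of roughly Rs 17.6 to 19.1 lakhs a year.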

Except the math only works if the automation actually works. And the data on that is ugly.

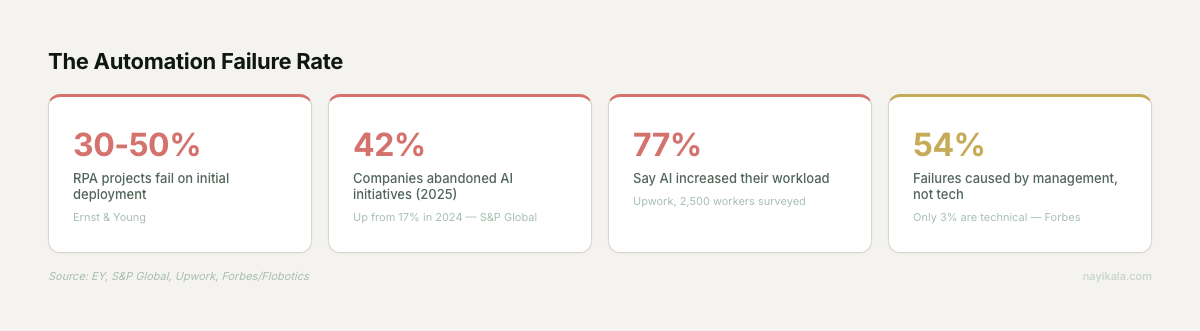

30-50% of RPA projects fail on initial deployment (Ernst & Young). More than 50% fail to scale beyond 10 bots. 42% of companies abandoned most of their AI initiatives in 2025 — up from 17% in 2024 (S&P Global). And perhaps the most telling data point: 77% of employees report AI actually increased their workloads (Upwork survey of 2,500 workers including 1,250 C-suite). Nearly half said they had no idea how to achieve the productivity gains their employers expected.

Klarna is the cautionary tale the industry should be studying. In early 2024, their AI handled 2.3 million conversations per month, cut resolution time from 11 minutes to under 2 minutes, replaced the equivalent of 700 agents, and saved $40 million annually. By mid-2025, the CEO admitted the strategy had "gone too far." Quality dropped. Customer complaints rose. They are now rehiring humans.

The pattern is consistent: automation works for the simple, predictable, high-volume layer. It fails — sometimes catastrophically — at nuance, escalation, and anything that requires judgment. The companies that cut the humans first and figure out the automation boundary later are the ones that end up with neither.

What You Can Do Monday Morning

Before buying a single tool or talking to a single vendor, do this.

Map the actual workload distribution

Take your current team. For one week, have each person log what they actually spend time on — not their job description, their actual hours. You will almost certainly find that 2-3 people are carrying invisible load from roles that were cut. You will also find that some of what they are doing is pure process friction: copy-pasting between systems, chasing approvals over WhatsApp, manually generating reports that nobody reads past the second slide.
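Once the week of logs is in, the tally can be as simple as the sketch below. The log entries, role names, and the `inherited` flag are all made up for illustration; a shared spreadsheet export would do the same job.

```python
from collections import defaultdict

# Hypothetical one-week log: (person, task, hours, inherited_from_cut_role)
LOG = [
    ("ops_lead", "vendor reconciliation", 9.0, True),
    ("ops_lead", "customer escalations", 14.0, True),
    ("ops_lead", "ops planning", 22.0, False),
    ("finance", "procurement follow-ups", 8.0, True),
    ("finance", "month-end close", 30.0, False),
    ("account_mgr", "client reporting", 6.0, True),
    ("account_mgr", "client calls", 34.0, False),
]


def inherited_load(log):
    """Return {person: (total_hours, inherited_hours)} for the logged week."""
    totals = defaultdict(lambda: [0.0, 0.0])
    for person, _task, hours, inherited in log:
        totals[person][0] += hours
        if inherited:
            totals[person][1] += hours
    return {person: (total, inh) for person, (total, inh) in totals.items()}


# Show the people carrying the most inherited work first.
ranked = sorted(inherited_load(LOG).items(), key=lambda kv: kv[1][1], reverse=True)
for person, (total, inherited) in ranked:
    print(f"{person}: {total:.0f}h total, {inherited:.0f}h inherited ({inherited / total:.0%})")
```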

Identify the three highest-volume, lowest-judgment tasks

These are your automation candidates. Not "customer support" as a category — that is too broad. Something like: "responding to delivery status inquiries on WhatsApp" or "matching incoming payments to invoices in the Tally export." Tasks that are repetitive, rule-based, and currently eating 10+ hours a week from someone who should be doing something else.
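As a rough sketch, the shortlist is a filter and a sort over the task inventory from your one-week log. The tasks, hours, and the `rule_based` flag below are illustrative assumptions, and deciding whether a task is truly rule-based is still a human call.

```python
# Hypothetical task inventory: (task, hours_per_week, rule_based)
TASKS = [
    ("delivery status replies on WhatsApp", 14.0, True),
    ("matching payments to invoices in the Tally export", 11.0, True),
    ("weekly sales report assembly", 10.0, True),
    ("customer escalations", 14.0, False),
    ("vendor negotiation", 6.0, False),
    ("copying leads from web form into CRM", 4.0, True),
]

MIN_HOURS = 10.0  # the "10+ hours a week" threshold from the text


def automation_candidates(tasks, min_hours=MIN_HOURS, limit=3):
    """Rule-based tasks at or above min_hours, highest volume first."""
    eligible = [t for t in tasks if t[2] and t[1] >= min_hours]
    return sorted(eligible, key=lambda t: t[1], reverse=True)[:limit]


for task, hours, _rule_based in automation_candidates(TASKS):
    print(f"{hours:>5.1f}h/week  {task}")
```

Note that "customer escalations" eats as many hours as the top candidate but never makes the list, because it is judgment work.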

Run the Klarna test on each candidate

Ask: if this automation handles the task incorrectly 5% of the time, what breaks? If the answer is "a customer gets a wrong delivery status and calls back," that is manageable. If the answer is "we send an incorrect invoice to a client," that is a different risk class. The boundary between automatable and not-automatable is almost always about the cost of errors, not the complexity of the task.
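One way to make the test concrete is to put a rough rupee figure on each mistake and multiply it out. In the sketch below, the case volumes and per-error costs are assumptions chosen for illustration; only the 5% error rate comes from the question above.

```python
ERROR_RATE = 0.05  # the 5% failure rate from the question above


def weekly_error_cost(cases_per_week, error_rate, cost_per_error):
    """Expected weekly cost of automation mistakes, in rupees."""
    return cases_per_week * error_rate * cost_per_error


# Hypothetical: 400 status inquiries a week, a wrong answer costs ~Rs 50
# of agent time when the customer calls back.
delivery_status = weekly_error_cost(400, ERROR_RATE, 50)

# Hypothetical: 60 invoices a week, a wrong invoice costs ~Rs 5,000
# in rework and client goodwill.
invoicing = weekly_error_cost(60, ERROR_RATE, 5000)

print(f"Delivery status: Rs {delivery_status:,.0f} per week")
print(f"Invoicing:       Rs {invoicing:,.0f} per week")
```

With these made-up inputs, the lower-volume task carries fifteen times the expected error cost, which is why volume alone is a poor guide.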

Measure your current response times

Pick your most critical inbound channel: WhatsApp, email, website form, whatever carries the highest-value inquiries. Measure the gap between when a message arrives and when a human first responds. Do this for 50 messages. The median gap will tell you more about your operational health than any dashboard.
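If the timestamps can be exported or copied into a sheet, the calculation is a few lines. This is a sketch with five invented messages standing in for the 50; the timestamp format is an assumption.

```python
from datetime import datetime
from statistics import mean, median


def response_gaps_minutes(messages):
    """Minutes between arrival and first human reply, per message."""
    gaps = []
    for arrived_at, first_reply_at in messages:
        gap = datetime.fromisoformat(first_reply_at) - datetime.fromisoformat(arrived_at)
        gaps.append(gap.total_seconds() / 60)
    return gaps


# Hypothetical sample: (arrived_at, first_human_reply_at)
SAMPLE = [
    ("2026-04-07T10:02:00", "2026-04-07T10:09:00"),
    ("2026-04-07T11:30:00", "2026-04-07T13:45:00"),
    ("2026-04-07T14:15:00", "2026-04-07T14:40:00"),
    ("2026-04-07T18:50:00", "2026-04-08T09:20:00"),  # arrived after hours
    ("2026-04-08T09:05:00", "2026-04-08T09:47:00"),
]

gaps = response_gaps_minutes(SAMPLE)
print(f"Median first response: {median(gaps):.0f} min")
print(f"Mean first response:   {mean(gaps):.0f} min")
```

The median is the better headline number because a single overnight message drags the mean far away from what a typical customer experiences.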

Where It Gets Harder

The steps above will get you real clarity on where automation fits. But the implementation is where most companies stall, and it is not because the tools are hard to set up.

The hard part is the integration layer. Your team’s work does not live in one system. It lives across WhatsApp conversations, Tally entries, Google Sheets that someone built three years ago and nobody fully understands, a CRM that is half-populated, and email threads that contain critical approvals with no audit trail. Automating a task means connecting these systems in a way that data flows correctly, consistently, and without silent failures.

Silent failures are the real killer. An automation that stops working and throws an error is fine — someone notices and fixes it. An automation that keeps running but starts producing slightly wrong outputs — matching payments to the wrong invoices, sending follow-ups to customers who already responded, marking leads as contacted when the message never went through — that erodes trust in the system and usually is not caught until the damage has compounded for weeks.
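The usual defence is a sanity check that turns a silent failure into a loud one. Below is a minimal sketch for the payment-matching case; the field names, the tolerance, and the `ReconciliationError` class are assumptions for illustration, not the API of any particular tool.

```python
class ReconciliationError(Exception):
    """Raised when a batch of automated matches fails a sanity check."""


def find_match_problems(matches, tolerance=0.01):
    """Return human-readable problems in a batch of payment-to-invoice matches."""
    problems = []
    seen_invoices = set()
    for m in matches:
        if abs(m["payment_amount"] - m["invoice_amount"]) > tolerance:
            problems.append(f"{m['payment_id']}: amount differs from invoice {m['invoice_id']}")
        if m["invoice_id"] in seen_invoices:
            problems.append(f"{m['payment_id']}: invoice {m['invoice_id']} already matched")
        seen_invoices.add(m["invoice_id"])
    return problems


def verify_batch(matches):
    """Return the batch size if it is clean; otherwise fail loudly."""
    problems = find_match_problems(matches)
    if problems:
        raise ReconciliationError("; ".join(problems))
    return len(matches)


# Hypothetical batch: the second payment is matched to an already-used invoice.
batch = [
    {"payment_id": "P1", "invoice_id": "INV-7", "payment_amount": 1200.0, "invoice_amount": 1200.0},
    {"payment_id": "P2", "invoice_id": "INV-7", "payment_amount": 900.0, "invoice_amount": 1200.0},
]
print(find_match_problems(batch))
```

A check like this does not make the matching smarter. It only guarantees that a bad batch stops and gets seen, instead of flowing through for weeks.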

Then there is the routing logic: which cases the automation handles, which get escalated to a human, and what information travels with the escalation so the human does not start from zero. The businesses that get this wrong end up with a team that is simultaneously managing the original workload and babysitting the automation — which is worse than no automation at all.
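A sketch of what that routing can look like in code. The intent labels, the confidence threshold, and the `Case` and `Escalation` shapes are hypothetical; the point is that every escalation carries its reason, the original message, and the history with it.

```python
from dataclasses import dataclass, field
from typing import Optional

AUTOMATABLE_INTENTS = {"delivery_status"}  # hypothetical allow-list
CONFIDENCE_FLOOR = 0.85                    # hypothetical threshold


@dataclass
class Case:
    case_id: str
    message: str
    intent: str
    confidence: float
    history: list = field(default_factory=list)


@dataclass
class Escalation:
    case_id: str
    reason: str
    message: str
    history: list
    draft_reply: Optional[str] = None


def route(case, draft_reply=None):
    """Return ('auto', reply) or ('human', Escalation with full context)."""
    if case.intent not in AUTOMATABLE_INTENTS:
        reason = f"intent '{case.intent}' is not automated"
    elif case.confidence < CONFIDENCE_FLOOR:
        reason = f"low confidence ({case.confidence:.2f})"
    elif draft_reply is None:
        reason = "no reply could be generated"
    else:
        return "auto", draft_reply
    return "human", Escalation(case.case_id, reason, case.message, list(case.history), draft_reply)


case = Case("C-101", "My refund is wrong", "refund_dispute", 0.93, ["Order placed 2 April"])
print(route(case))
```

The default path here is the human one: a case is automated only when it passes every check, so anything unexpected falls through to a person.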

54% of technology implementation failures are caused by poor management, not technical problems (Forbes via Flobotics). Only 3% are caused by the technology itself. In every automation deployment we have done, the gap between buying the tool and having it actually reduce the team’s load was a design problem — what connects to what, what triggers what, what happens when something breaks, and who sees the break first.

The orchestration layer between your team’s judgment and your automation’s throughput is where the actual engineering lives.
