The IT Rules 2026 mandate watermarks and provenance metadata on every AI-generated image, video, and voice note your business produces. Text is exempt. Visual and audio content is not. Here’s what that actually means for your operations.

Your designer used Midjourney to create a product banner last week. Your social media person ran a Canva AI background removal on twelve product shots. Someone on the team sent an AI-generated voice note to a WhatsApp broadcast list of 400 customers.

None of that had a watermark. None of it carried provenance metadata. And as of February 20, 2026, all of it falls under India’s new synthetic content regulations.

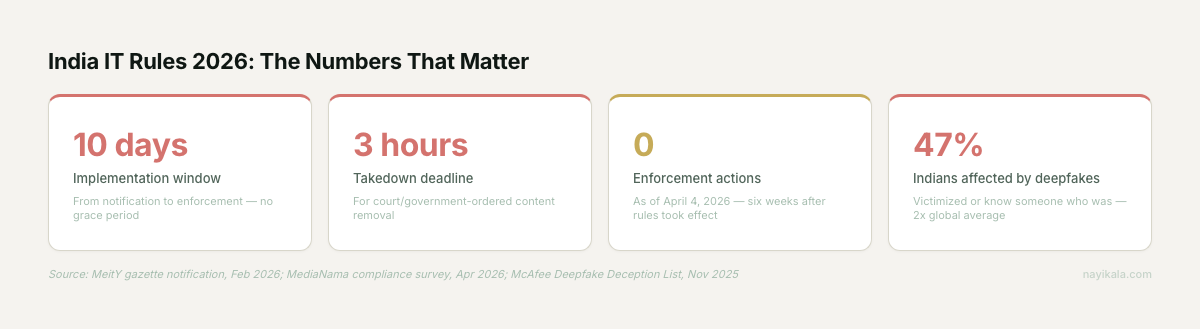

The IT Rules 2026 Amendment landed with a 10-day implementation window. No grace period. The Internet Freedom Foundation and the US-India Strategic Partnership Forum both called the timeline "operationally unfeasible" (IFF public statement, February 2026). MeitY’s response was direct: "citizen safety outweighs platform discomfort."

Most business owners we talk to haven’t read the notification. The ones who have are confused about what applies to them. Fair enough -- the rules are dense, the definitions are new, and the enforcement picture is still forming. But the obligations are live. And the content your team produced this morning may already be in scope.

What the Rules Actually Say

The amendment introduces a legal category called Synthetically Generated Information (SGI) -- audio, visual, or audiovisual content created or altered algorithmically to appear real and indistinguishable from a natural person or real-world event (Rule 2(1)(wa)).

Here is the part that matters for most Indian SMEs: text-only AI content is explicitly excluded from SGI. Your ChatGPT-drafted WhatsApp messages, your AI product descriptions on Meesho, your blog posts written with Claude -- all outside scope.

What is in scope: AI-generated images, AI voice notes, AI video, AI-modified product photos where the modification goes beyond routine editing. Color correction and brightness adjustments are carved out. AI background replacement on a product photo is not.

The labeling requirements are specific

- Visual SGI must carry a visible watermark prominently displayed on-screen

- Audio SGI must include a spoken disclaimer at the beginning identifying it as synthetic

- All SGI must embed permanent provenance metadata with a unique identifier traceable to the originating platform

- That metadata must be "irremovable" -- Rule 3(3)(b) explicitly prohibits stripping, suppressing, or modifying it
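One way to make these requirements operational is to track them per asset before anything goes out. Below is a minimal sketch built on a hypothetical internal `Asset` record; the Rules define no such structure. It treats video as visual content only, so it does not decide whether audiovisual SGI also needs a spoken disclaimer. It also does not model "irremovability", because a checklist cannot verify that.

```python
from dataclasses import dataclass
from typing import List, Optional

KINDS = {"image", "video", "audio", "text"}

@dataclass
class Asset:
    # Hypothetical internal record for one AI-assisted asset; field names are illustrative.
    kind: str                                  # "image", "video", "audio" or "text"
    visible_watermark: bool = False
    spoken_disclaimer_at_start: bool = False
    provenance_id: Optional[str] = None        # unique identifier carried in embedded metadata

def missing_labels(asset: Asset) -> List[str]:
    """List the labeling requirements summarised above that this asset does not yet meet."""
    if asset.kind not in KINDS:
        raise ValueError(f"unknown kind: {asset.kind!r}")
    if asset.kind == "text":
        return []                              # text-only content falls outside SGI
    gaps = []
    if asset.kind in ("image", "video") and not asset.visible_watermark:
        gaps.append("visible watermark")
    if asset.kind == "audio" and not asset.spoken_disclaimer_at_start:
        gaps.append("spoken disclaimer")
    if not asset.provenance_id:
        gaps.append("provenance metadata")
    return gaps

print(missing_labels(Asset("image", visible_watermark=True)))
# ['provenance metadata']
```

An empty list means the asset clears this checklist. It does not mean the asset is compliant.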

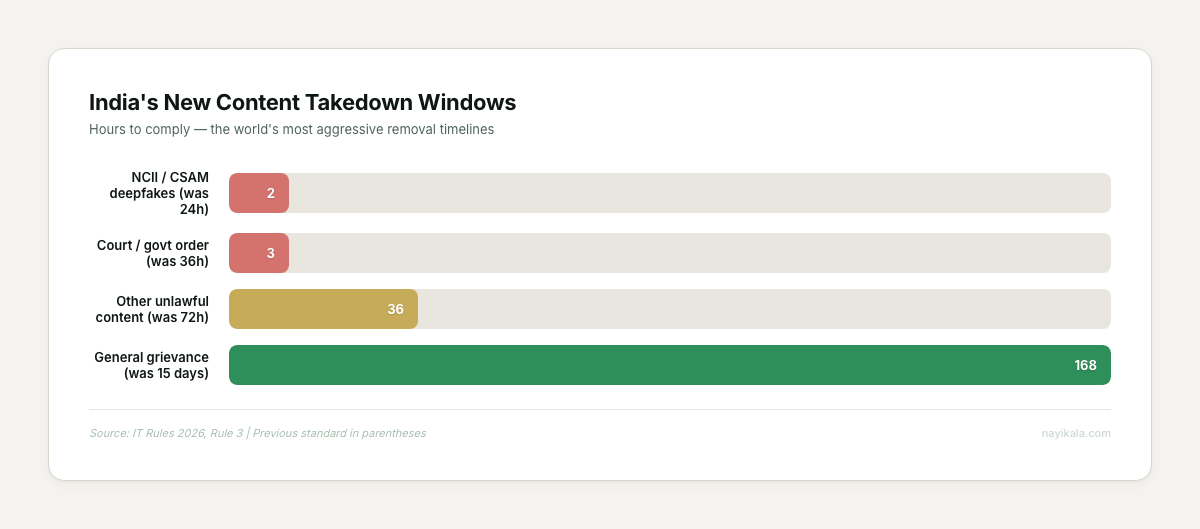

The takedown windows got aggressive

Court or government-ordered content must come down in 3 hours (previously 36). Non-consensual intimate imagery and CSAM deepfakes: 2 hours. That 2-hour window is, to our knowledge, the shortest mandatory content removal timeline imposed by any government globally.
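These windows run on the clock, not on business hours. That makes it worth computing the hard deadline the moment an order arrives. Here is a minimal sketch. It assumes the clock starts at receipt and uses hypothetical category keys.

```python
from datetime import datetime, timedelta, timezone

# Removal windows as described above; the category keys are hypothetical labels.
TAKEDOWN_WINDOWS = {
    "court_or_government_order": timedelta(hours=3),
    "ncii_or_csam_deepfake": timedelta(hours=2),
}

IST = timezone(timedelta(hours=5, minutes=30))

def takedown_deadline(received_at: datetime, category: str) -> datetime:
    """Latest time the content must be down, counted from when the order arrived."""
    if received_at.tzinfo is None:
        raise ValueError("use timezone-aware datetimes")
    return received_at + TAKEDOWN_WINDOWS[category]

order_in = datetime(2026, 3, 1, 22, 15, tzinfo=IST)
print(takedown_deadline(order_in, "court_or_government_order"))
```

Under this sketch, an order received at 22:15 IST has to be actioned by 01:15 the next morning. The deadline does not pause overnight.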

Who carries the compliance burden

This is the critical distinction most coverage misses. The 2026 Rules’ synthetic media obligations fall primarily on intermediaries -- platforms hosting others’ content -- not on publishers producing their own content. Instagram carries the labeling obligation for the AI banner your business posted, not your business directly.

But. If your business runs any platform where users can post content -- a community forum, a review section, a marketplace with seller-uploaded images -- you are an intermediary. And intermediary obligations apply in full.

There are no small-business exemptions. A 5-person generative AI startup faces the same watermarking rules as Adobe or OpenAI. No revenue thresholds, no startup carve-outs, no scaled compliance schedules.

What You Can Do Monday Morning

The compliance picture is messy, but the audit is straightforward. Here is what you can do this week without spending a rupee.

Map your AI content surface area

Open a spreadsheet. For one week, log every piece of content your team produces or publishes that involved an AI tool. Columns: date, content type (image/video/audio/text), AI tool used, channel published on, whether it carries any AI disclosure.
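If someone on the team would rather script the log than maintain it by hand, the same five columns map directly onto a CSV file. Here is a minimal sketch. The column names and example rows are hypothetical.

```python
import csv
import io

# The five columns suggested above; the column names themselves are illustrative.
FIELDS = ["date", "content_type", "ai_tool", "channel", "ai_disclosure"]
CONTENT_TYPES = {"image", "video", "audio", "text"}

def write_log(rows, stream):
    """Write audit rows as CSV, rejecting content types outside the four categories."""
    writer = csv.DictWriter(stream, fieldnames=FIELDS)
    writer.writeheader()
    for row in rows:
        if row["content_type"] not in CONTENT_TYPES:
            raise ValueError(f"unknown content type: {row['content_type']!r}")
        writer.writerow(row)

# Hypothetical entries for one day.
rows = [
    {"date": "2026-03-02", "content_type": "image", "ai_tool": "Midjourney",
     "channel": "Instagram", "ai_disclosure": "no"},
    {"date": "2026-03-02", "content_type": "image", "ai_tool": "Canva AI",
     "channel": "Shopify store", "ai_disclosure": "no"},
    {"date": "2026-03-02", "content_type": "audio", "ai_tool": "unknown",
     "channel": "WhatsApp broadcast", "ai_disclosure": "no"},
]

buf = io.StringIO()   # swap for open("ai_content_log.csv", "w", newline="") in practice
write_log(rows, buf)
print(buf.getvalue())
```

The content-type check catches typos like "imgae" at write time, so they never silently drop out of the later analysis.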

The businesses we’ve run this audit with are consistently surprised. The marketing team knows about the Midjourney banners. Nobody tracked that the customer support lead has been sending AI-generated voice notes on WhatsApp for three months.

Separate text from everything else

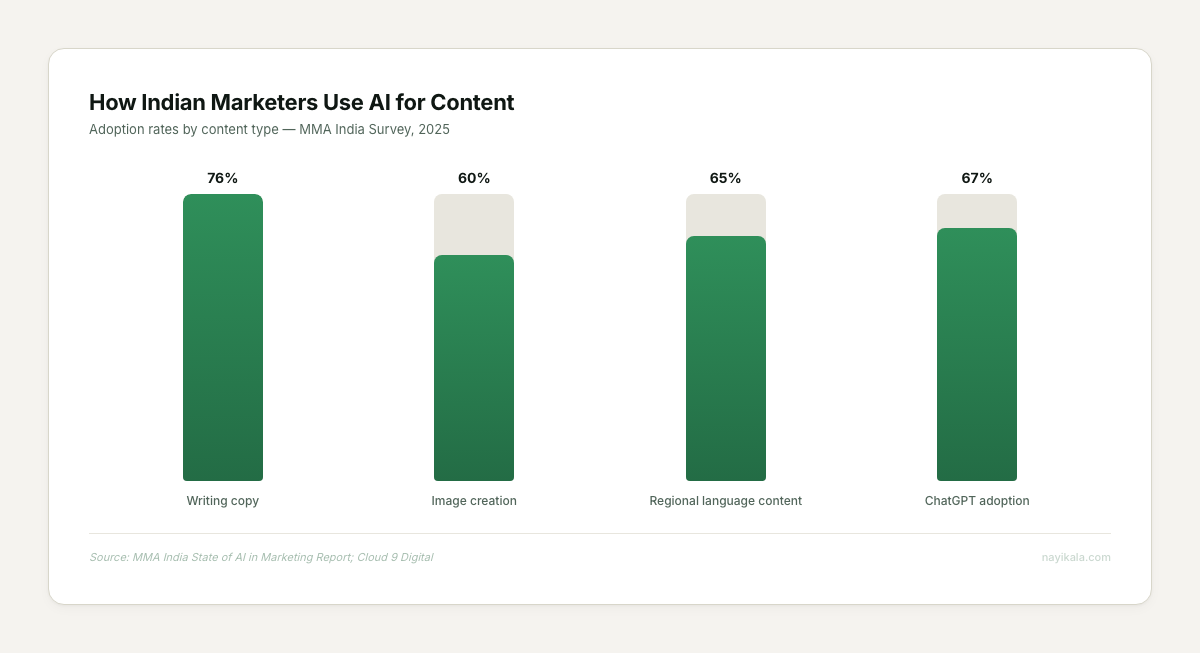

Text is out of scope. That is most of what Indian SMEs produce with AI -- according to an MMA India survey from 2025, 76% of Indian marketers reported using AI primarily for writing copy. If your AI usage is purely text-based, your compliance exposure under the SGI rules is minimal.

The risk sits in the visual and audio layer. The same MMA India survey puts AI image creation adoption at 60%. That Diwali campaign with AI-generated festival graphics. The AI product photos for your Shopify store. The synthetic voiceover on your Instagram Reel. All in scope.
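With a week of entries logged, the text/non-text split can be computed rather than eyeballed. The sketch below assumes the same hypothetical columns as the audit log. It counts in-scope entries that carry no AI disclosure and groups them by channel. It is a triage view, not a compliance test: a "yes" disclosure says nothing about whether a watermark or metadata is present.

```python
import csv
import io
from collections import Counter

IN_SCOPE = {"image", "video", "audio"}   # text is excluded from SGI

def sgi_exposure(log_csv: str) -> Counter:
    """Count in-scope entries without an AI disclosure, grouped by channel."""
    reader = csv.DictReader(io.StringIO(log_csv))
    return Counter(
        row["channel"]
        for row in reader
        if row["content_type"] in IN_SCOPE
        and row["ai_disclosure"].strip().lower() != "yes"
    )

# Hypothetical log extract.
sample = """date,content_type,ai_tool,channel,ai_disclosure
2026-03-02,text,ChatGPT,WhatsApp,no
2026-03-02,image,Midjourney,Instagram,no
2026-03-03,audio,unknown,WhatsApp,no
2026-03-03,image,Canva AI,Shopify,yes
"""
print(sgi_exposure(sample))
```

In this sample, the ChatGPT text row drops out entirely. What remains is exactly the kind of exposure described above: the Instagram banner and the WhatsApp voice note.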

Check your intermediary status

Ask yourself one question: does your platform allow users to upload and share content that other users can see? If yes, you are an intermediary under the IT Act. If no -- if you only publish your own content -- the labeling obligation sits with the platforms you publish on, not with you.

If you are an intermediary, you need a Grievance Officer. This can be the founder. They must be India-resident. Their name and contact details must be published prominently on your website. Complaints must be acknowledged within 24 hours and resolved within 15 days (7 days for certain categories under the 2026 amendment).
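A Grievance Officer needs two dates per complaint: when it must be acknowledged and when it must be resolved. Here is a minimal sketch. It assumes calendar days counted from receipt. The `expedited` flag is a hypothetical stand-in for the "certain categories" with a 7-day window; which complaints qualify has to come from the amendment text itself.

```python
from datetime import datetime, timedelta, timezone

IST = timezone(timedelta(hours=5, minutes=30))

def grievance_deadlines(received_at: datetime, expedited: bool = False) -> dict:
    """Acknowledgment and resolution deadlines for one complaint."""
    resolve_days = 7 if expedited else 15   # 7 days only for the categories the amendment names
    return {
        "acknowledge_by": received_at + timedelta(hours=24),
        "resolve_by": received_at + timedelta(days=resolve_days),
    }

complaint_in = datetime(2026, 3, 1, 10, 0, tzinfo=IST)
print(grievance_deadlines(complaint_in))
```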

Start the metadata habit now

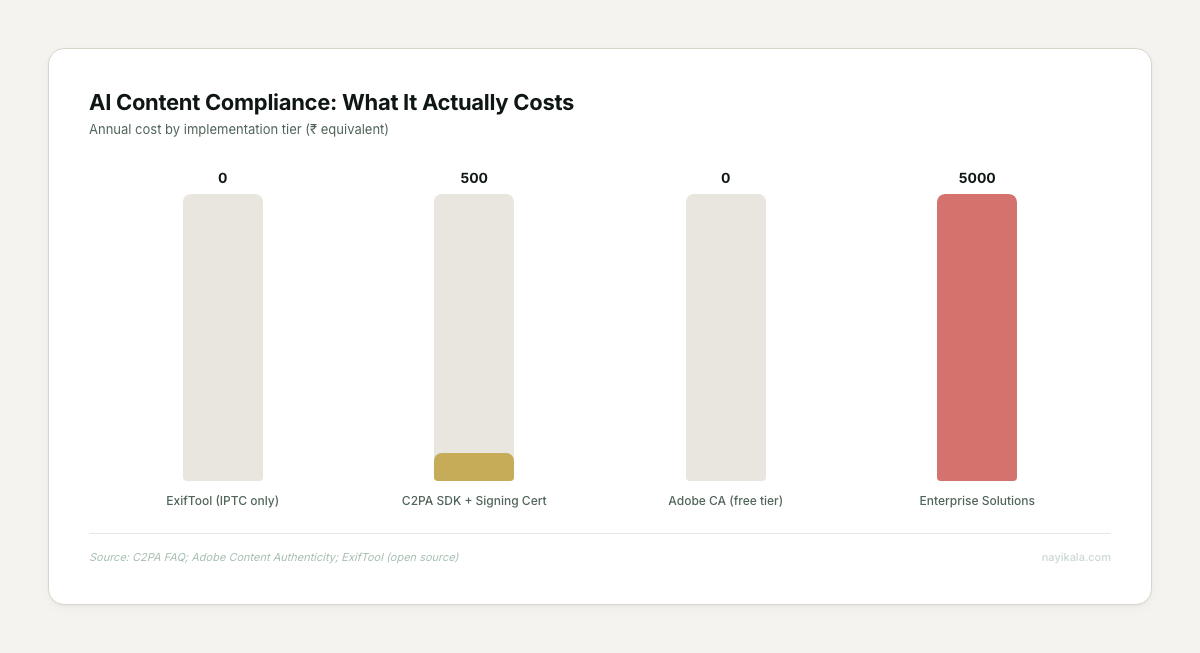

For any AI-generated image your team creates, use ExifTool (free, open source) to embed IPTC metadata tagging the AI tool used. The new IPTC Photo Metadata Standard 2025.1 includes specific fields: AI System Used, AI System Version Used, AI Prompt Information. ExifTool version 13.40+ supports these.
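A small wrapper keeps the ExifTool step consistent across the team. The sketch below shells out to the `exiftool` binary. The `XMP-iptcExt:` tag names are an assumption based on the IPTC property names. Confirm them against `exiftool -list` on your installed version before relying on them.

```python
import shutil
import subprocess
from typing import List

# Assumed ExifTool tag names for the IPTC 2025.1 AI fields; verify for your version.
AI_TAGS = {
    "system": "XMP-iptcExt:AISystemUsed",
    "version": "XMP-iptcExt:AISystemVersionUsed",
    "prompt": "XMP-iptcExt:AIPromptInformation",
}

def exiftool_args(path: str, system: str, version: str = "", prompt: str = "") -> List[str]:
    """Build an exiftool command that writes the non-empty AI fields in place."""
    values = {"system": system, "version": version, "prompt": prompt}
    args = ["exiftool", "-overwrite_original"]   # skip the *_original backup copy
    args += [f"-{AI_TAGS[key]}={value}" for key, value in values.items() if value]
    args.append(path)
    return args

def tag_image(path: str, system: str, version: str = "", prompt: str = "") -> None:
    """Run exiftool on one file; raises if the binary is missing or the write fails."""
    if shutil.which("exiftool") is None:
        raise RuntimeError("exiftool not found on PATH")
    subprocess.run(exiftool_args(path, system, version, prompt), check=True)
```

Passing arguments as a list rather than a shell string keeps prompts that contain spaces or quotes intact. Afterwards, `exiftool -j banner.jpg` prints the stored fields as JSON for a spot check.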

This costs nothing. A developer can set it up in 2-4 hours. It will not satisfy the full provenance requirement -- social platforms strip file metadata on upload -- but it creates an internal audit trail that demonstrates compliance intent.
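The internal audit trail can be as simple as one JSON line per asset. Each line ties the exact file bytes to the tool and channel involved. Here is a minimal sketch. The record fields are hypothetical. Append mode avoids rewriting old lines but does not make the log tamper-proof.

```python
import hashlib
import json
import uuid
from datetime import datetime, timezone

def audit_entry(file_bytes: bytes, ai_tool: str, channel: str) -> dict:
    """One audit record linking a file's exact bytes to how it was made and where it went."""
    return {
        "record_id": str(uuid.uuid4()),                      # internal identifier for this asset
        "sha256": hashlib.sha256(file_bytes).hexdigest(),    # changes if the file is re-exported
        "ai_tool": ai_tool,
        "channel": channel,
        "logged_at": datetime.now(timezone.utc).isoformat(),
    }

def append_entry(log_path: str, entry: dict) -> None:
    # JSON Lines: one record per line, opened in append mode so earlier lines are not rewritten.
    with open(log_path, "a", encoding="utf-8") as f:
        f.write(json.dumps(entry, ensure_ascii=False) + "\n")
```

Hash the file *after* the ExifTool step, so the logged hash matches the tagged version that was actually published.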

Where It Gets Harder

Everything above is housekeeping. The structural problems start when you try to build a system that actually satisfies the rules at scale.

The metadata stripping problem

The rules mandate permanent, irremovable provenance metadata. Every major social platform -- Instagram, LinkedIn, X, WhatsApp -- strips file metadata on upload. The IFF flagged this directly: metadata can be stripped by screenshotting; watermarks removed by recompression. The mandate requires embedding but cannot physically prevent stripping.

This means compliant content labeling requires a dual-track approach: metadata embedded in the file itself AND a visible label rendered into the content pixels. The metadata serves the audit trail. The visible label survives the platform’s upload pipeline.
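The metadata track can at least be checked after each publish. Download the published copy, run `exiftool -j` on both the original and the download, and compare. In the sketch below, the field names are assumed to match ExifTool's tag names for the IPTC AI fields. The visible label is passed in as a flag set when it was rendered, since it lives in the pixels rather than in the metadata.

```python
import json
from typing import List

AI_FIELDS = ("AISystemUsed", "AISystemVersionUsed", "AIPromptInformation")

def lost_fields(before_json: str, after_json: str) -> List[str]:
    """AI metadata fields present before upload but missing after the platform round trip.

    Both arguments are assumed to be `exiftool -j <file>` output for a single file.
    """
    before = json.loads(before_json)[0]
    after = json.loads(after_json)[0]
    return [f for f in AI_FIELDS if f in before and f not in after]

def disclosure_survives(lost: List[str], visible_label: bool) -> bool:
    # Once metadata is stripped, only a label rendered into the pixels still reaches viewers.
    return visible_label or not lost
```

When `lost_fields` reports losses, as it typically will for the platforms listed above, `disclosure_survives` returns `False` unless the visible label was rendered.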

The detection accuracy gap

Intermediaries must deploy detection systems audited every 3 months with at least 50% accuracy. How "accuracy" is measured is undefined in the rules. India’s government-backed C-DAC deepfake detection tool currently tops out at 89% accuracy (C-DAC published benchmarks, 2025). At production scale, an 11% error rate adds up quickly: across 10 million uploads, that is roughly 1.1 million pieces incorrectly flagged or missed.
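Why an undefined "accuracy" matters: when synthetic content is a small share of uploads, raw accuracy can look healthy while the detector misses everything. The sketch below computes accuracy, precision and recall from a confusion matrix. The two detectors and their counts are hypothetical, chosen only to illustrate the point.

```python
def detector_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Accuracy, precision and recall from a confusion matrix (synthetic = positive class)."""
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        # Of the items flagged as synthetic, the share that really were.
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        # Of the synthetic items, the share the detector caught.
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }

# Hypothetical test set: 1,000 items, 100 of them synthetic.
flags_nothing = detector_metrics(tp=0, fp=0, tn=900, fn=100)
noisy = detector_metrics(tp=90, fp=100, tn=800, fn=10)
print(flags_nothing)
print(noisy)
```

The detector that never flags anything scores 90% accuracy, comfortably above a 50% floor, while catching none of the synthetic items. The second catches 90% of them at 89% accuracy, but more than half of its flags are wrong.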

Meta’s own AI labeling system has a documented false positive problem -- real product photography gets tagged "AI Info" because residual Firefly or Photoshop metadata triggers the detection pipeline. If your product photos are getting incorrectly labeled as AI-generated, that is a brand trust problem with no clear resolution path today.

The no-standards problem

MeitY mandated provenance metadata but specified no technical standard. No C2PA requirement, no specific watermarking protocol, no reference implementation. The C2PA spec (version 2.3, maintained by Adobe, Microsoft, Google, BBC under the Linux Foundation) is the closest thing to an industry standard -- the open-source SDK is free, signing certificates run Rs 4,000-40,000/year -- but adopting it is a bet on a standard that India has not officially endorsed.

The workflow integration problem

A compliant content pipeline needs to intercept every AI-generated asset before publication, attach metadata, verify the metadata survived the publishing channel, log the provenance chain, and route flagged content through human review within the mandated response windows. The self-hosted n8n workflow for this is roughly nine nodes deep -- from AI generation trigger through ExifTool metadata attachment, Hive API detection verification, database logging, human approval gate, and conditional publish with audit trail.
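The order of those stages matters more than the tooling. Below is a plain-Python sketch of the same sequence: metadata, detection check, log, human gate, conditional publish. It is not the n8n workflow itself. `Job`, `run_pipeline` and the callables are hypothetical. The detector is passed in as a function rather than calling any real detection API.

```python
from dataclasses import dataclass, field
from typing import Callable, List

@dataclass
class Job:
    name: str
    kind: str
    metadata: dict = field(default_factory=dict)
    log: List[str] = field(default_factory=list)   # stands in for the database-logging step

def run_pipeline(job: Job, ai_tool: str,
                 detect: Callable[[Job], float],
                 approve: Callable[[Job], bool],
                 publish: Callable[[Job], None]) -> str:
    """Attach metadata, record a detection score, gate on human approval, then publish."""
    job.metadata["AISystemUsed"] = ai_tool        # in practice: the ExifTool step
    job.log.append("metadata_attached")

    score = detect(job)                            # in practice: an external detection service
    job.log.append(f"detection_score:{score:.2f}")

    if not approve(job):                           # human approval gate
        job.log.append("held_for_review")
        return "held"

    publish(job)                                   # only reached after approval
    job.log.append("published")
    return "published"

published = []
banner = Job("diwali_banner.jpg", "image")
print(run_pipeline(banner, "Midjourney", lambda j: 0.97, lambda j: True, published.append))
print(banner.log)
```

Because every stage appends to `job.log` before the next one runs, a held or failed job still leaves a record of how far it got.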

The 3-hour takedown window means your grievance response system cannot be a founder checking email between meetings. It needs automated intake, instant acknowledgment, countdown timers, and escalation routing.
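Escalation routing can key off how much of a complaint's window has already been used, rather than off fixed clock times. That way the same logic serves a 3-hour order and a 24-hour acknowledgment. Here is a minimal sketch. The thresholds and tier names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical routing tiers, keyed on the share of the response window already used.
ESCALATION_STEPS = [
    (1.0, "breached"),
    (0.75, "escalate_to_founder"),
    (0.5, "page_on_call"),
]

def escalation_level(received_at: datetime, deadline: datetime, now: datetime) -> str:
    """Pick a routing tier from how much of a complaint's window has elapsed."""
    window = (deadline - received_at).total_seconds()
    if window <= 0:
        raise ValueError("deadline must be after receipt")
    used = (now - received_at).total_seconds() / window
    for threshold, level in ESCALATION_STEPS:
        if used >= threshold:
            return level
    return "queue"

received = datetime(2026, 3, 2, 9, 0, tzinfo=timezone.utc)
deadline = received + timedelta(hours=3)
print(escalation_level(received, deadline, received + timedelta(minutes=140)))
# escalate_to_founder
```

On a 3-hour order, the founder tier triggers at the 2h15 mark. That leaves 45 minutes to act, not the few minutes left if someone happens to check email late.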

The Enforcement Picture

As of today, zero formal enforcement actions have been taken under the 2026 rules. MediaNama contacted Meta, Google, X, Snap, ShareChat, LinkedIn, OpenAI, Microsoft, Apple, Anthropic, and Midjourney -- none responded to compliance status queries (MediaNama, April 2026). The Data Protection Board of India has been constituted but its members have not been appointed.

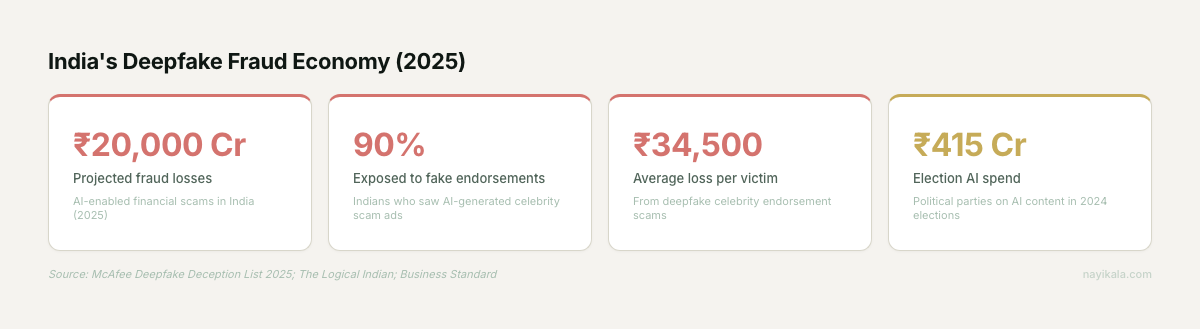

This will not last. India’s business regulation environment changed an estimated 9,420 times in 2024, roughly 26 changes per calendar day or about 36 per working day (IndiaConnected, 2025 Regulatory Complexity Report). The enforcement infrastructure is being built. The Valueleaf case shows where criminal liability lands when synthetic content and commercial intent intersect: four employees were arrested for knowingly running deepfake investment ads through Meta’s ecosystem, with Rs 130 crore in ad credit exposure.

The compliance infrastructure you build now is not for today’s enforcement. It is for the enforcement that arrives without a grace period, just like the rules themselves did.

The provenance pipeline design, the metadata persistence layer across platforms that strip it, and the sub-3-hour grievance routing architecture -- that is where the actual engineering decisions live.
